I’ve been testing StealthWriter AI for content writing and I’m unsure if it’s really as effective and undetectable as it claims. I need help from people who have actually used it for blogs, essays, or client work. What were your real results, any issues with detection tools, quality, or originality, and is it worth paying for long-term?

StealthWriter AI review from someone who paid for it

Link to the tool:

https://cleverhumanizer.ai/community/t/stealthwriter-ai-review-with-ai-detection-proof/23

What I used and what it costs

I tried StealthWriter AI on a paid account for a bit. Pricing sat in the 20 to 50 dollars per month range depending on the tier, so it is not on the cheap side for an AI humanizer.

It has two engines:

• Ghost Mini

• Ghost Pro

You also get:

• Intensity slider from 1 to 10

• Several writing style presets

• Free tier with 10 uses per day, up to 1,000 words, account required

• Ghost Pro only available if you pay

On paper it looked decent enough for people trying to get AI text past detectors without blowing up the word count.

How I tested it

I fed it the same source text I use for all humanizer tests:

• Nonfiction piece on climate science, around 800 to 1,000 words

• Neutral tone, a mix of short and long sentences

• Some dates, stats, and geographic terms

I ran:

• Both Ghost Mini and Ghost Pro

• Intensity levels from 4 to 10

• A couple of the presets

Then I checked the outputs with:

• ZeroGPT

• GPTZero
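If you want to repeat this kind of test, it helps to write the run grid down before you start so no combination gets skipped. Below is a minimal sketch, assuming you paste text into each tool by hand and record the scores yourself; `build_test_plan` and the `ai_percent` field are hypothetical names for illustration, and nothing here calls StealthWriter or any detector.

```python
from itertools import product

# Values taken from the test setup described above.
ENGINES = ["Ghost Mini", "Ghost Pro"]
INTENSITIES = range(4, 11)          # levels 4 through 10 inclusive
DETECTORS = ["ZeroGPT", "GPTZero"]


def build_test_plan():
    """Return one row per engine/intensity/detector combination.

    ai_percent starts as None and is meant to be filled in by hand
    after each manual run (hypothetical field, not a tool output).
    """
    return [
        {"engine": engine, "intensity": level, "detector": detector, "ai_percent": None}
        for engine, level, detector in product(ENGINES, INTENSITIES, DETECTORS)
    ]


if __name__ == "__main__":
    plan = build_test_plan()
    print(f"{len(plan)} runs to record")
    for row in plan[:4]:
        print(row)
```

Style presets are left out of the grid because the review does not name which ones were used; add them as another list if you test them.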

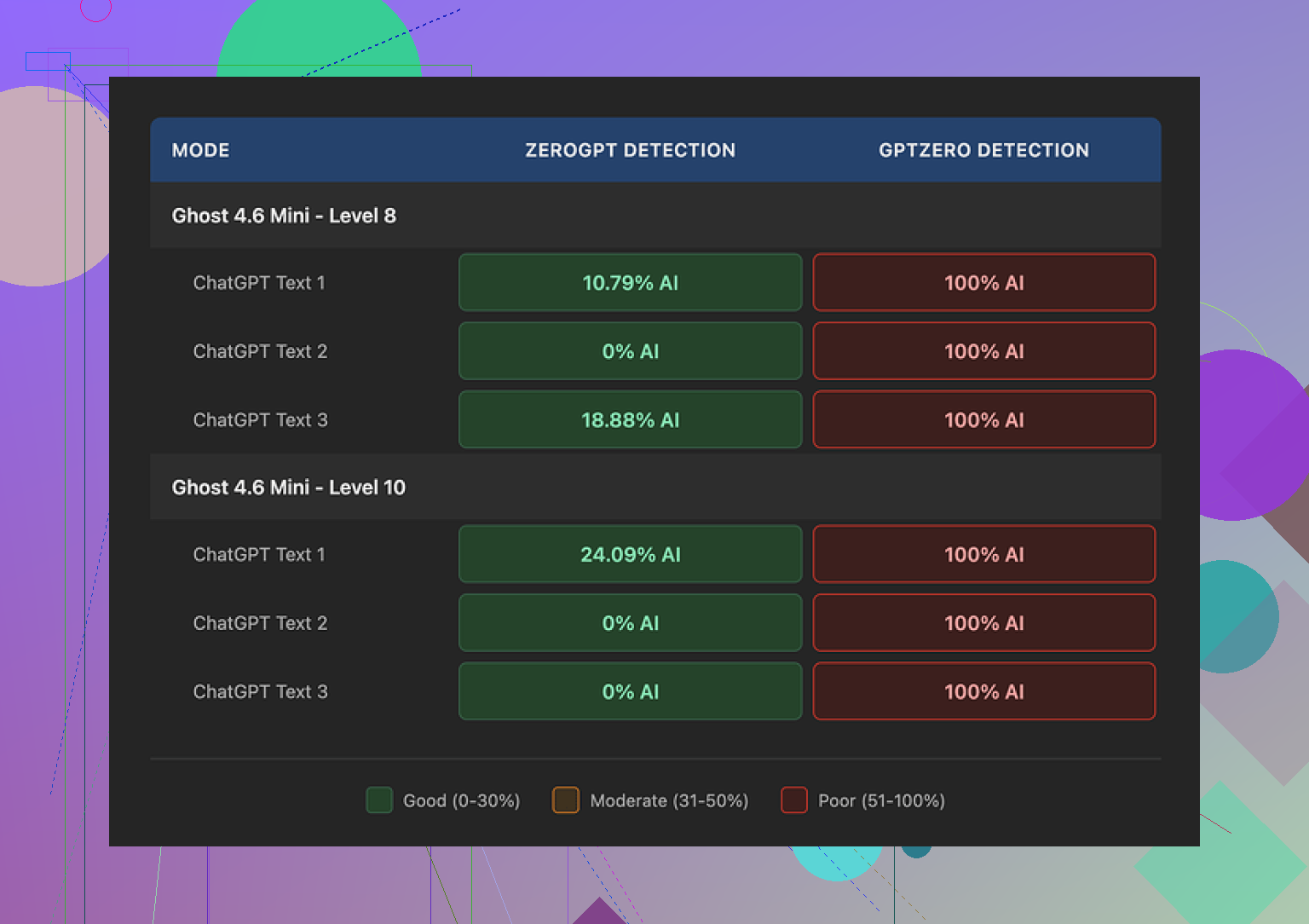

Detection results

Here is where things got weird.

On ZeroGPT:

• Level 8 sometimes showed 0 percent AI

• Another run landed at 10.79 percent

• Lower levels like 4 to 6 tended to flag as AI, but not as hard

So if I only looked at ZeroGPT, I might have thought the tool was doing its job.

On GPTZero:

• Every single run scored as 100 percent AI

• Did not matter if I used Ghost Mini or Ghost Pro

• Did not matter if intensity was 4, 8, or 10

• Style presets did nothing useful for detection

That was repeatable. I tried several fresh inputs and it stayed the same.


Writing quality at different intensity levels

This part matters if you deal with clients, teachers, or editors who actually read what you send them.

At Level 8 intensity:

• I would rate it around 7 out of 10

• It keeps the meaning intact most of the time

• Some lines sound off, like a slightly tired non-native writer

• Occasional missing words, so you need to proofread every paragraph

• Tone drifts a bit, but not in a way that jumps out on a quick skim

At Level 10 intensity:

Quality dropped.

There were odd additions that felt out of place for a climate science article, for example:

• It threw in phrases like “god knows” inside an otherwise neutral paragraph

• It produced things like “Coastlines areas”

• And lines such as “feeling quite more frequent flooding,” which reads like something half translated

So Level 10 looked more “random,” but not more human. It looked like it tried too hard to scramble patterns and ended up introducing obvious mistakes.

If you thought of cranking intensity to max to kill detection, GPTZero did not care. It still flagged everything as 100 percent AI, and the text looked worse to a human.

Length and structure

One point in its favor.

Most humanizers I tried inflate the text:

• 40 to 50 percent longer in some tools

• Extra filler phrases

• Repetitive sentence padding

StealthWriter kept text length close to the source:

• Paragraph count stayed similar

• Sentence lengths shifted, but the overall word count stayed in the same ballpark

• That makes it less suspicious in contexts where length is checked or compared to a prior draft

So if your workflow needs roughly the same length output as your input, this tool handled that better than many others.
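If you want to check length inflation on your own text, comparing word counts before and after a run is enough. Here is a minimal sketch, assuming whitespace splitting is a good enough word count for this purpose; `length_change_percent` is a hypothetical helper name, not part of any humanizer.

```python
def word_count(text: str) -> int:
    """Count words by splitting on whitespace."""
    return len(text.split())


def length_change_percent(source: str, output: str) -> float:
    """Return how much longer (+) or shorter (-) the output is, in percent."""
    source_words = word_count(source)
    if source_words == 0:
        raise ValueError("source text is empty")
    return (word_count(output) - source_words) / source_words * 100.0


if __name__ == "__main__":
    # Made-up sample strings, only to show the call.
    source = "Sea levels rose steadily during the last century."
    output = "During the last century, sea levels kept rising at a steady pace."
    print(f"{length_change_percent(source, output):+.1f}%")
```

A result in the +40 to +50 range would match the inflation I saw in other tools; a value near zero is what "same ballpark" means here.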

Free tier vs paid

Free tier:

• 10 humanizations per day

• Up to 1,000 words per run

• Account needed

• Good enough to test if it fits your use case

Paid tiers:

• Unlock Ghost Pro

• More runs per day

• Higher limits

In my runs, Ghost Pro did not change detection results on GPTZero. Output style shifted a bit, but not in a consistent way that helped.

How it compares to another humanizer

Out of the tools I tried in the same testing batch, Clever AI Humanizer stood out more.

Main points from my notes:

• Outputs were closer to normal human writing without weird inserts

• No “god knows” type surprises thrown into technical text

• Detection performance looked better in my quick tests

• That one was free at the time I used it

If you are deciding where to start and do not want to spend 20 to 50 dollars per month during testing, I would point you to Clever AI Humanizer first instead of StealthWriter.

Who StealthWriter might still fit

If you:

• Only care about ZeroGPT and not GPTZero

• Need output that does not inflate word count too much

• Are fine manually editing each paragraph for odd phrases and grammar slips

• Already budget for paid tools and run a lot of text through them

Then it might be worth keeping in your toolbox.

If your main worry is GPTZero or you want something that feels safer out of the box, my experience with StealthWriter did not match the price tag.

I’ve used StealthWriter AI on paid and free accounts for blog posts, uni-style essays, and some low-risk client drafts. Short version: it helps a bit, but it is not a “fire and forget, undetectable” tool.

Here is what I noticed in real use, without repeating what @mikeappsreviewer already covered.

- For blogs and SEO content

- For general blog posts, StealthWriter did an ok job if I kept intensity around 5 to 7.

- Below 5, output stayed close to original AI text and looked “AI-ish”.

- Above 7, the tone shifted and I saw odd phrasing, similar to what Mike mentioned, but in my runs it was more subtle.

- It did not wreck headings or structure, so for affiliate posts and informational blogs it was usable after a manual edit pass.

Practical tip:

I had better results when I first edited the AI draft myself, then ran only the “robotic” parts through StealthWriter. Whole-article runs tended to create an unsteady tone.

- For essays and school work

This is where problems started.

- Turnitin AI scores stayed high in my tests, even after StealthWriter.

- GPTZero often flagged my content as AI-heavy.

- Teachers who skim text noticed nothing obvious, but any serious checker still saw AI.

So if your goal is to “beat detectors for essays”, StealthWriter on its own is not reliable. You still need:

- Your own edits.

- Some sentence rewrites by hand.

- Adjusted references, examples, and personal takes.

- For client work

I used it on a few low-budget content orders where clients did not use detectors and only cared about “does this read human”.

- At mid intensity, the text passed client review.

- Higher intensity created small grammar slips, which took time to fix.

- That editing time made me question the subscription cost.

If you work with picky editors, you will spend extra time line-editing. The tool helps reduce “AI tone”, but it does not remove the need for a human edit.

- AI detection in my runs

I had different numbers than Mike in a few tools, but the same pattern.

- ZeroGPT: some chunks looked safe, some not.

- GPTZero: often high AI probability, though not always 100 percent for me.

- Content at intensity 10 did not fool detectors more, it only looked messier.

Detectors keep changing, so any “0 percent forever” expectation is risky. I treat StealthWriter as a stylistic helper, not an invisibility cloak.

- When StealthWriter made sense for me

- Short sections, 200 to 400 words, where I wanted a quick tone shift.

- Places where word count needed to stay close to original.

- Blog posts where no one used GPTZero, only eyeball checks.
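For the short-section approach, one way to prepare a longer draft is to group its paragraphs into pieces that stay under a word limit. This is a rough sketch, assuming paragraphs are separated by blank lines and that 400 words is the upper bound you want; `chunk_paragraphs` is a hypothetical helper, and picking which chunks are actually "stiff" is still a manual call.

```python
def chunk_paragraphs(text: str, max_words: int = 400) -> list[str]:
    """Group consecutive paragraphs into chunks of at most max_words words.

    A single paragraph longer than max_words is kept whole as its own chunk.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        # Start a new chunk if adding this paragraph would exceed the limit.
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on paragraph boundaries instead of a hard word cut keeps each chunk readable on its own, which matters when you paste pieces back into the article.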

- When it did not make sense

- Academic work checked by Turnitin or GPTZero.

- High-stakes client work where contracts mention AI.

- Long-form content where I would spend a lot of time fixing awkward lines.

- Alternative that worked better for me

Since you asked about real experience, I’ll mention what replaced StealthWriter in my stack.

I got better human-like output and fewer odd insertions by pairing my own rewrite with Clever Ai Humanizer. For anyone comparing tools, I would test that one at least once.

If you want a starting point, try something like “make AI writing sound more human”, then compare the result to StealthWriter on the same paragraph.

- Practical workflow suggestion

If you keep StealthWriter:

- Generate your AI draft.

- Do a quick manual cleanup.

- Run only the stiff sections through Ghost Mini or Pro at 5 to 7.

- Read out loud and fix anything off.

- Run your own checker if detection risk matters.

SEO-friendly description for your topic

StealthWriter AI Review for blogs, essays, and client work. Real user experience with AI humanizers, detection tools, and writing quality. Learn how StealthWriter performs against GPTZero, ZeroGPT, and other AI detectors, how it affects tone and grammar, and when it makes sense to use it. Compare it with Clever Ai Humanizer and see which tool fits your workflow for content writing, academic tasks, and professional projects.

Short version: StealthWriter is “okay-ish” as a stylistic fixer, not the magic invisibility cloak its marketing hints at.

Couple of angles that weren’t really hit by @mikeappsreviewer and @byteguru:

- “Undetectable” claim vs real risk

The main issue is the promise. As soon as any tool advertises “bypass all AI detectors,” it’s already setting you up for disappointment. Detectors evolve, models shift, training data gets updated. Anything that passes today can get retro-flagged later if a detector is retrained.

So using StealthWriter (or any humanizer) as your only defense for graded essays or contract-based client work is just playing roulette with your future self.

- Style consistency across long pieces

On 2k+ word articles, StealthWriter starts to feel like three different people wrote it.

- Early paragraphs: decently smooth.

- Middle: you start seeing tiny weirdness, like slightly off idioms.

- End: if intensity is high, tone drifts and the “voice” does not match the start.

If your teacher or editor reads the whole thing, that inconsistency is a bigger red flag than any detector score.

- “Humanized” ≠ original

StealthWriter mostly shuffles phrasing and rewords at the surface level. It does not add real personal experience, specific opinions, or unique examples unless they are already in your draft. Detectors look at more than just synonyms.

So if you feed it generic AI sludge, you get slightly less robotic generic sludge. For blogs that might be fine, for essays it is still weak.

- Where it actually helps

I found it most useful for:

- Making bland AI intros/outros sound less polished and more casual.

- Smoothing over sections that sound too “ChatGPTy” after I already did a pass.

- Preserving word count when I literally just need a slightly different phrasing for a paragraph.

- Where it’s a bad idea

- Anything tied to academic honesty policies. If Turnitin is in the mix, treat StealthWriter as a refinement tool at best, not cover.

- Clients who explicitly ban AI. If they ever run checks later and see “high AI likelihood,” saying “but I used a humanizer” is not going to help.

- People hoping to skip real writing work. You still have to think, edit, and add your own brain.

- Cost vs hassle

This is where I differ a bit from @mikeappsreviewer. I don’t think the price is insane if you’re cranking out daily content, but the editing time you spend fixing weird phrases at higher intensity eats the “productivity boost” pretty fast. For casual users, it’s overkill.

- Alternative worth testing

If you’re already in the humanizer game, it honestly makes sense to pit tools against each other. I’ve had more consistent, less janky output pairing my own edits with Clever Ai Humanizer. For anyone trying to make AI text sound more like a normal person without changing length too much, it’s worth running the same paragraph through both StealthWriter and Clever Ai Humanizer and comparing with your own eyes, not just detectors.

- Practical answer to your “what works” question

If you keep StealthWriter in your stack:

- Use mid intensity, not max.

- Only run stiff sections, not the entire piece.

- Add real examples, opinions, and your own wording before and after using it.

- Treat AI detectors as “risk signals,” not something you can permanently game.

And just to be blunt: if your main goal is “I want to hand this in or sell this and never worry,” no humanizer is going to give you that. StealthWriter included.

StealthWriter AI review topic, cleaned up for search and readability:

StealthWriter AI Review for Blogs, Essays, and Client Projects

Looking for honest feedback on StealthWriter AI for real-world writing? This discussion covers how StealthWriter performs for blog posts, academic essays, and client work. Learn how well it actually hides AI-generated content, how it behaves at different intensity levels, and what happens when you test it against popular AI detectors like GPTZero, ZeroGPT, and Turnitin.

Compare user experiences, see when StealthWriter helps and when it causes problems, and find out how it stacks up against alternatives like Clever Ai Humanizer for more natural, human-sounding content.