I used Originality AI to check my essay, and it flagged sections as AI-generated even though I wrote them myself. I need help understanding whether this tool is reliable for academic work, and whether others have experienced false positives. Any advice or similar experiences would really help me figure out my next steps.

Been there, done that, got the AI detection flag. Honestly, Originality AI can be kinda hit-or-miss. It’s notorious for flagging some human-written text as AI, especially if your writing is extra clear or follows a logical structure—basically penalizing you for writing well, lol. Also, it seems to go after essays that are factual or use academic wording. Definitely not 100% reliable, and you’re not the only one getting flagged for stuff you actually wrote yourself.

Plenty of people in academic circles have complained about this, which is a real pain if you’re trying to prove your work is original (ironically). I haven’t seen independently verified accuracy numbers, but going by discussions online, false positives are common. Some people claim rates as high as 30% depending on subject and writing style, though those figures are anecdotal. So yeah, you’re not crazy, and it’s not foolproof.

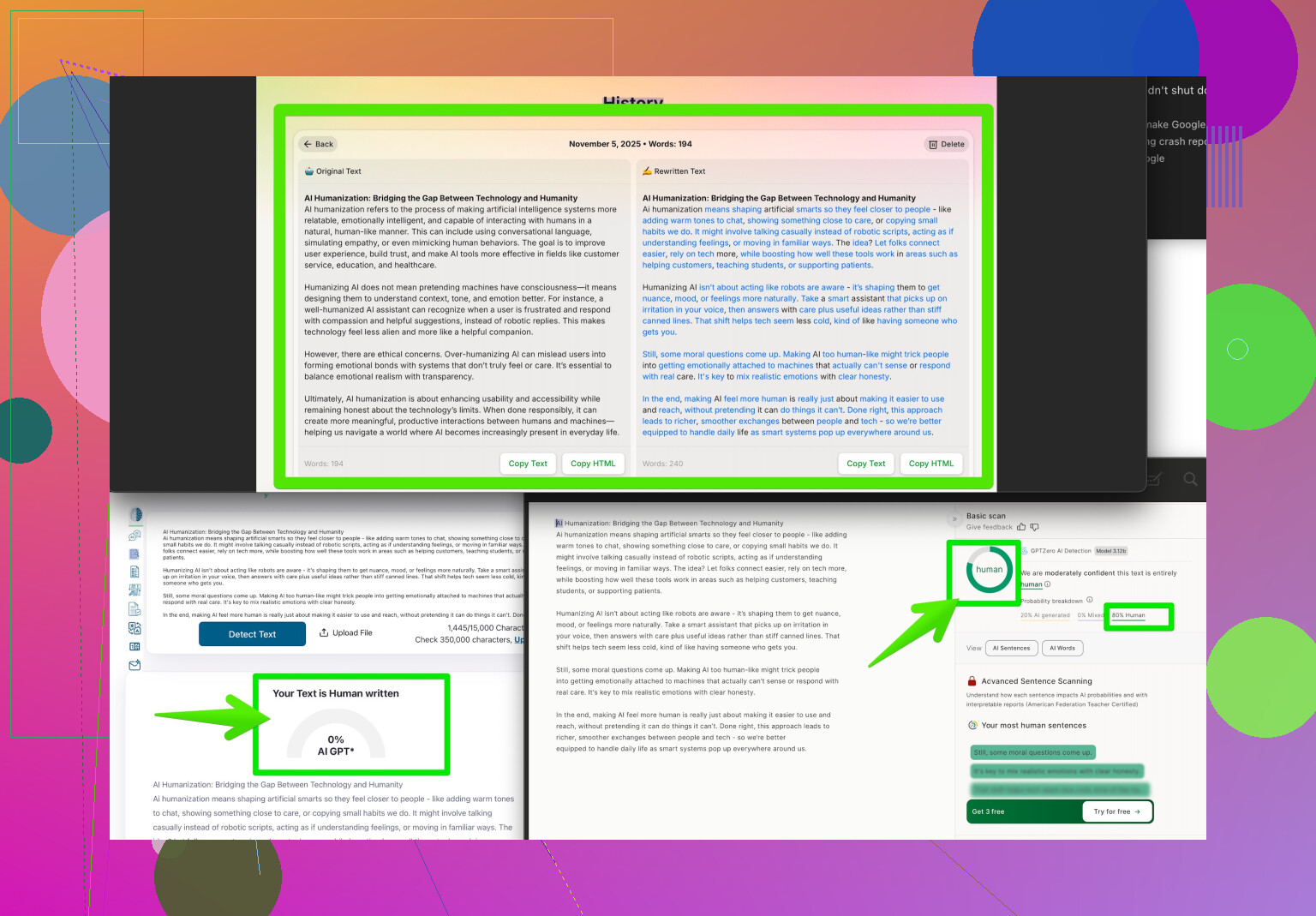

If you need your essay to pass these detectors for school or work, you might want to try the Clever AI Humanizer. It’s getting some buzz for helping humanize your text and avoid those pesky false flags. You can check it out here: make your writing undetectable to AI checkers. Might save you some extra hassle, at least until these detectors get smarter or, who knows, actually accurate.

Not gonna lie, I’ve had similar frustrations with Originality AI. Honestly, the tool’s whole “detect AI” process seems a bit like a magic 8-ball, shaking out different answers depending on what you write and sometimes how “polished” it sounds. You’d think solid, clear writing would be a good thing, but apparently AI detectors see that and start sounding the alarm anyway. Like, apparently being coherent means “robot wrote this”? Lol, okay.

Accuracy-wise, there isn’t much transparent info from Originality AI—they don’t give hard stats, which is a red flag. Academic folks and freelance writers have been trading horror stories online. Loads of false positives, especially with technical or research-heavy writing. It’s mostly algorithms chasing “patterns,” and they just aren’t nuanced enough yet. If your essay is flagged, you’re definitely not the only one. There’s even chatter that depending on the topic and structure, the false positive rate could be anywhere from 18-35%. Yikes.

To echo @stellacadente a bit (but not totally repeat), I wouldn’t trust these checkers as gospel for academia. Sure, Clever AI Humanizer is a handy workaround if you have to get past the detectors, but an alternative is to mix up your sentence structures, use contractions, or even throw in a typo or two. Basically, try to sound less like a textbook and more like a normal person writing at 2am for a class due at midnight. Still, no guarantees.

At the end of the day, detectors just aren’t there yet—sometimes I think they flag Shakespeare as AI. Maybe focus on feedback from real humans instead of algorithms? Until these tools mature, stuff like Originality AI is more of a “maybe, maybe not” than a real judge of authorship. If you want some crowd-tested strategies for making your writing look human and bypassing AI detection, check out this post on Reddit’s best tips to make your writing more human. Helps to see what actual users have experimented with!

Bottom line: If Originality AI wobbles on your genuine work, don’t stress. You’re definitely not alone!