I’m exploring AI tools that make generated content sound more human, and I came across one that claims to be a “clever AI humanizer” built around real user feedback. I’m not sure how effective it is, whether it’s safe to use for blogs and social media, or if it causes issues with AI detectors or SEO. Could anyone who has real experience with this kind of tool share what worked, what didn’t, and what to watch out for?

Clever AI Humanizer: Actual User Take, Not Sponsored Nonsense

I’ve been messing around with a bunch of “AI humanizer” tools lately, and I’ll start with the one everyone keeps name-dropping: Clever AI Humanizer.

Site is here (this is the real one, not a clone):

https://aihumanizer.net/

No tracking link, no affiliate stuff, just the plain URL.

Quick heads up about the legit site

A few people DM’d me asking, “Which one is the real Clever AI Humanizer?” because Google is full of “Clever-ish” humanizers buying ads on the same keyword. Some of those redirect you into subscriptions, paywalls, or fake “lifetime” deals.

What I’ve seen so far:

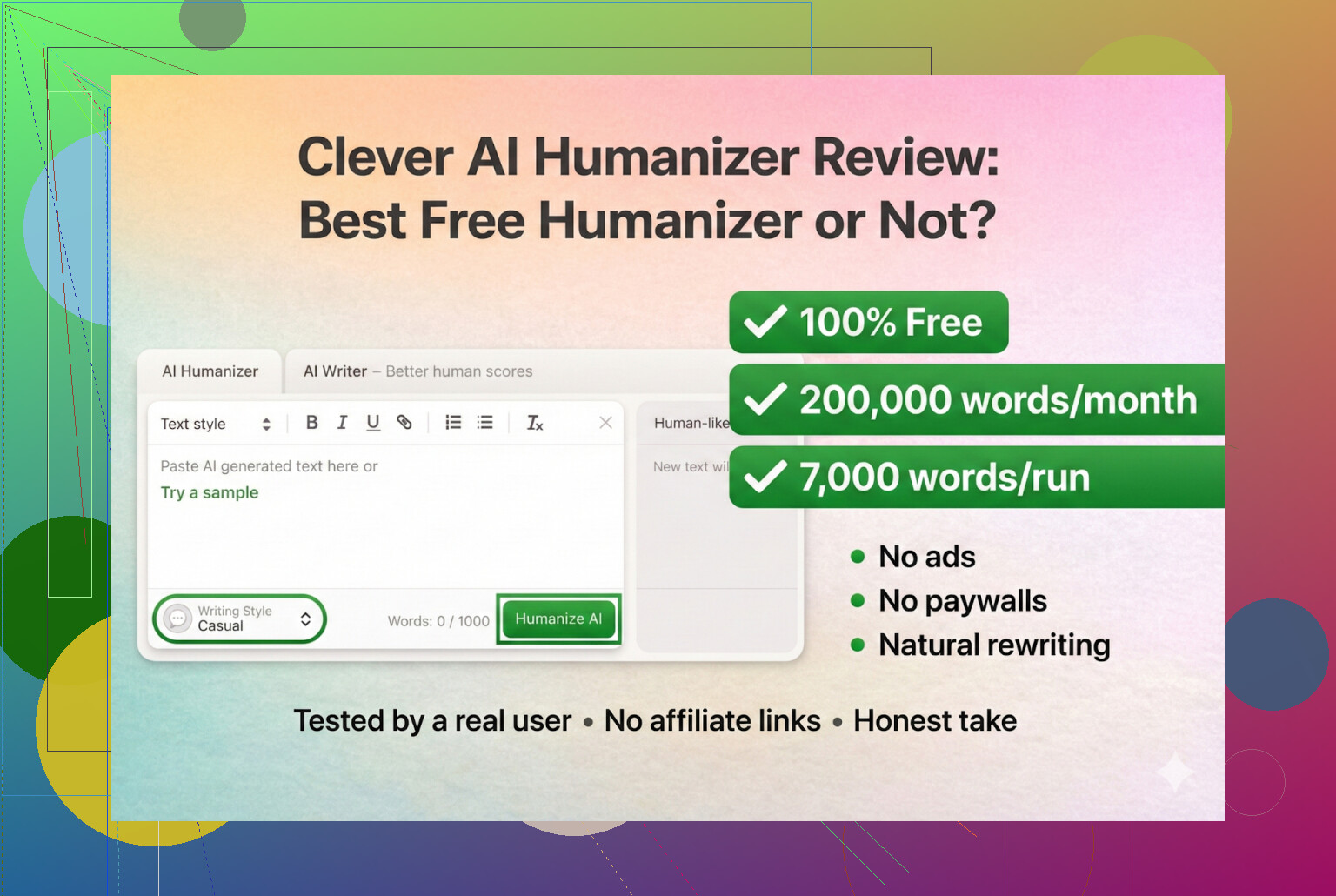

- Clever AI Humanizer at https://aihumanizer.net/ is:

- Free to use

- No premium tier, no “pro unlock,” no monthly upsell

- Other tools using similar names do try to push paid plans

So if you’ve “paid for Clever,” odds are it wasn’t actually Clever.

My test setup (fully AI on AI)

I didn’t write a heartfelt essay by hand and then feed it in. I did the laziest thing possible:

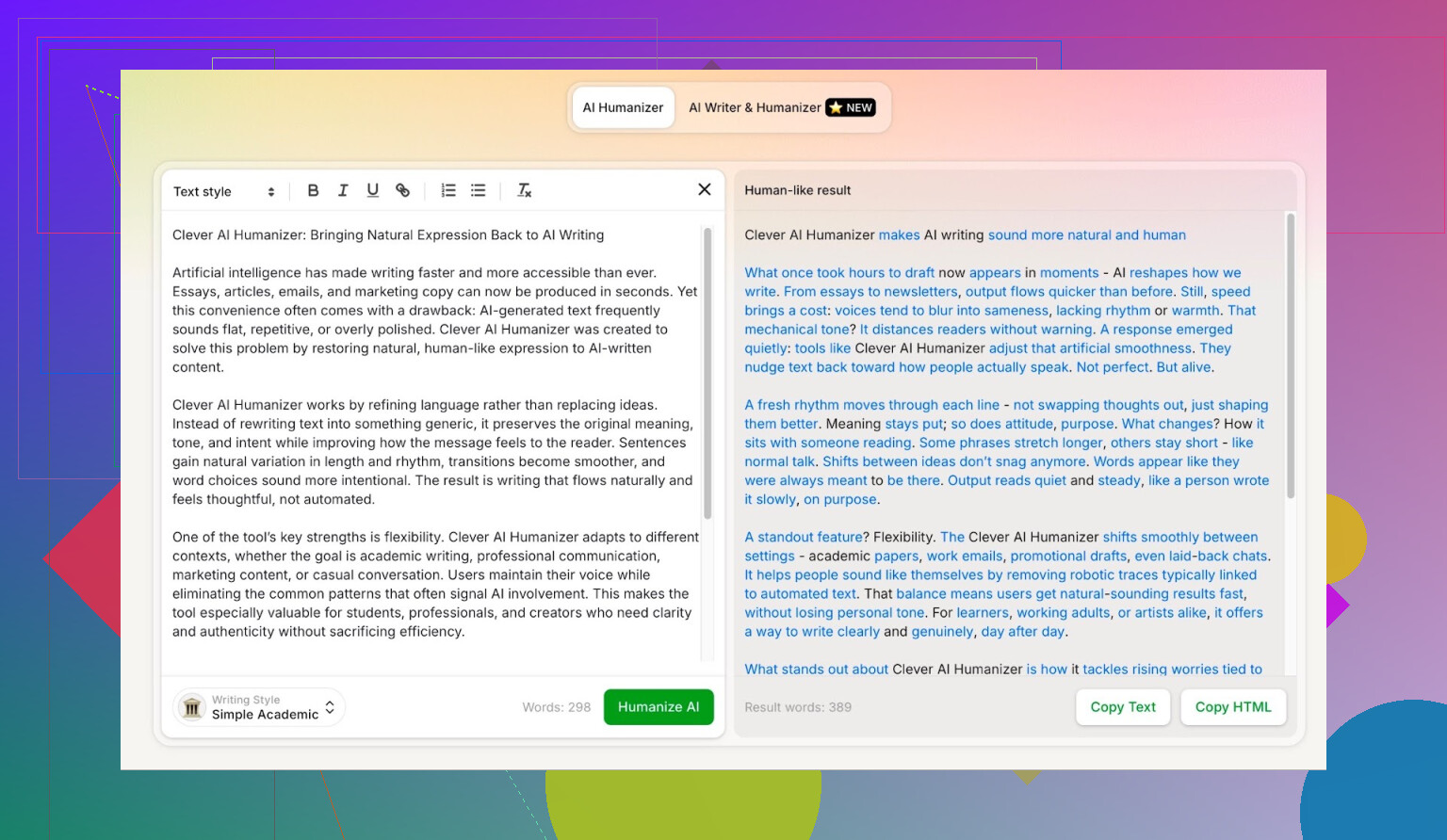

- Asked ChatGPT 5.2 to generate a completely AI-written piece about Clever AI Humanizer.

- Took that raw AI block and pasted it into Clever AI Humanizer.

- Used the Simple Academic mode.

Simple Academic is an interesting choice:

- It leans slightly academic but not full research-paper level.

- It’s more structured and formal than casual blog writing.

- This style tends to trip a lot of detectors, since “light academic” is a classic AI pattern.

So I basically picked one of the hardest styles to disguise and used that as my starting point.

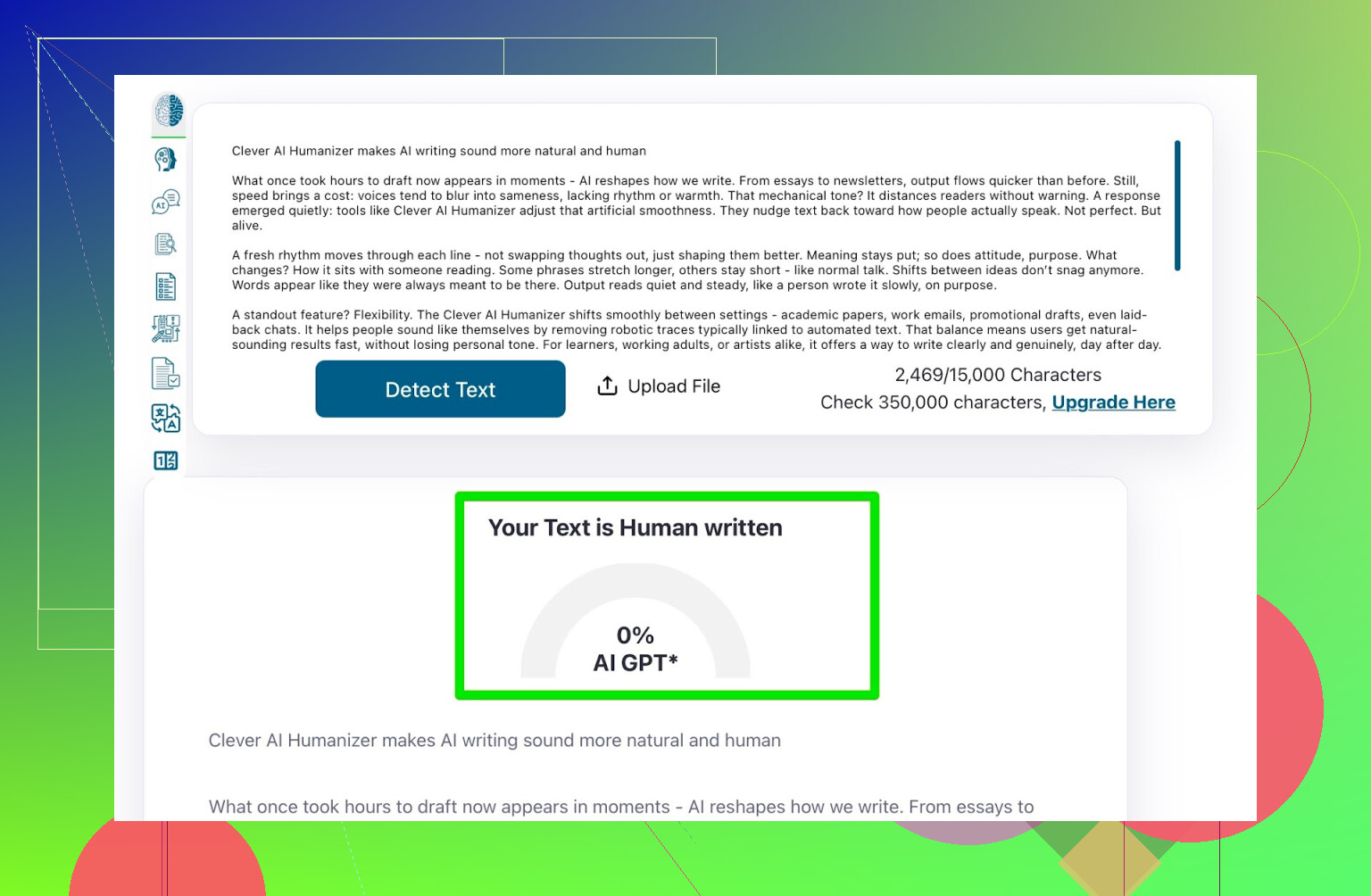

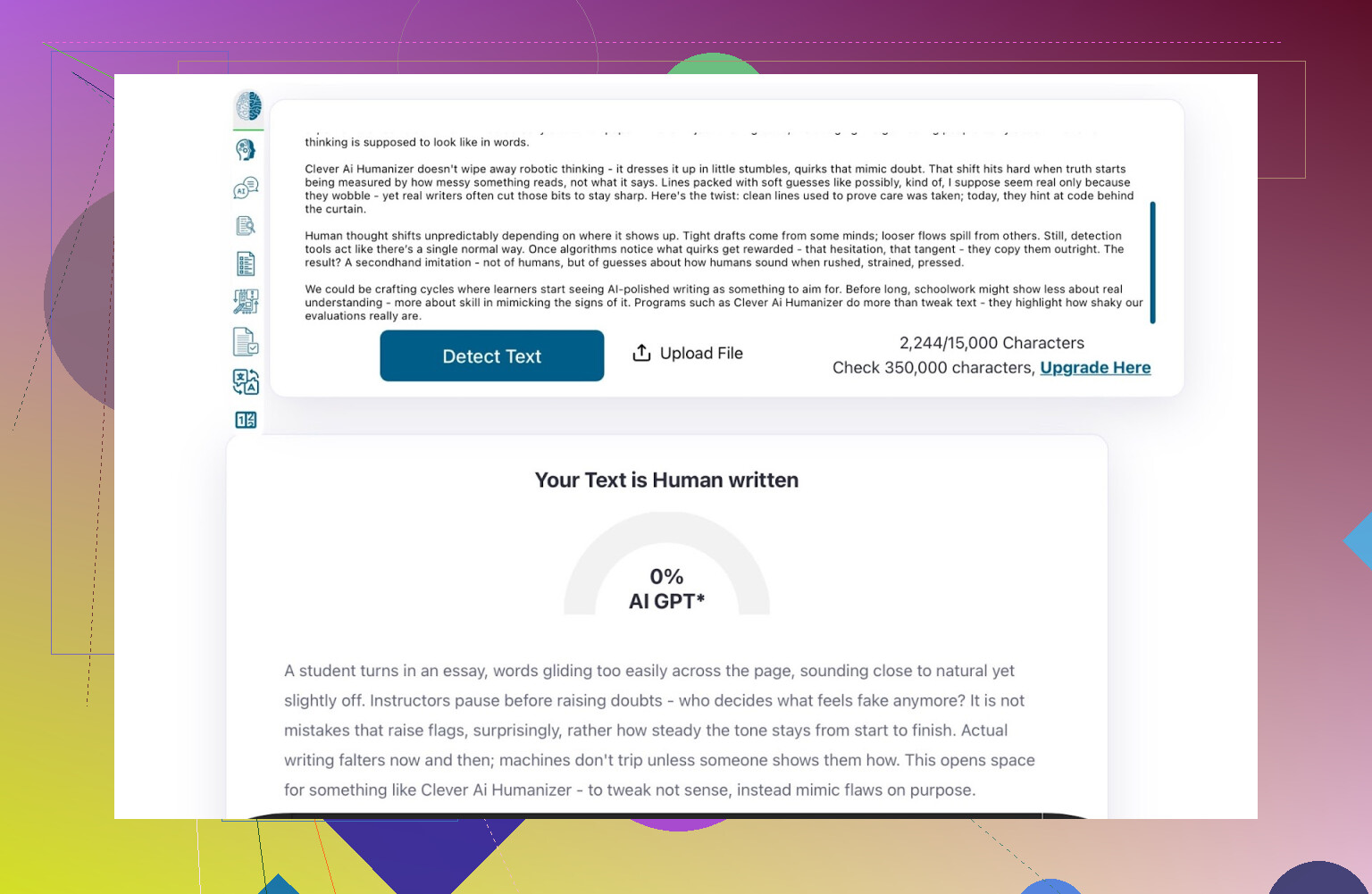

Detector check: ZeroGPT

First stop: ZeroGPT.

Do I personally trust it? Not really. I’ve seen it flag the U.S. Constitution as 100% AI, which is… yeah.

But whether I like it or not, ZeroGPT is still one of the first results in Google, and a lot of teachers, clients, and random managers use it.

On my Clever-processed text:

- ZeroGPT result: 0% AI

So at least according to one of the most widely used detectors, it passed hard.

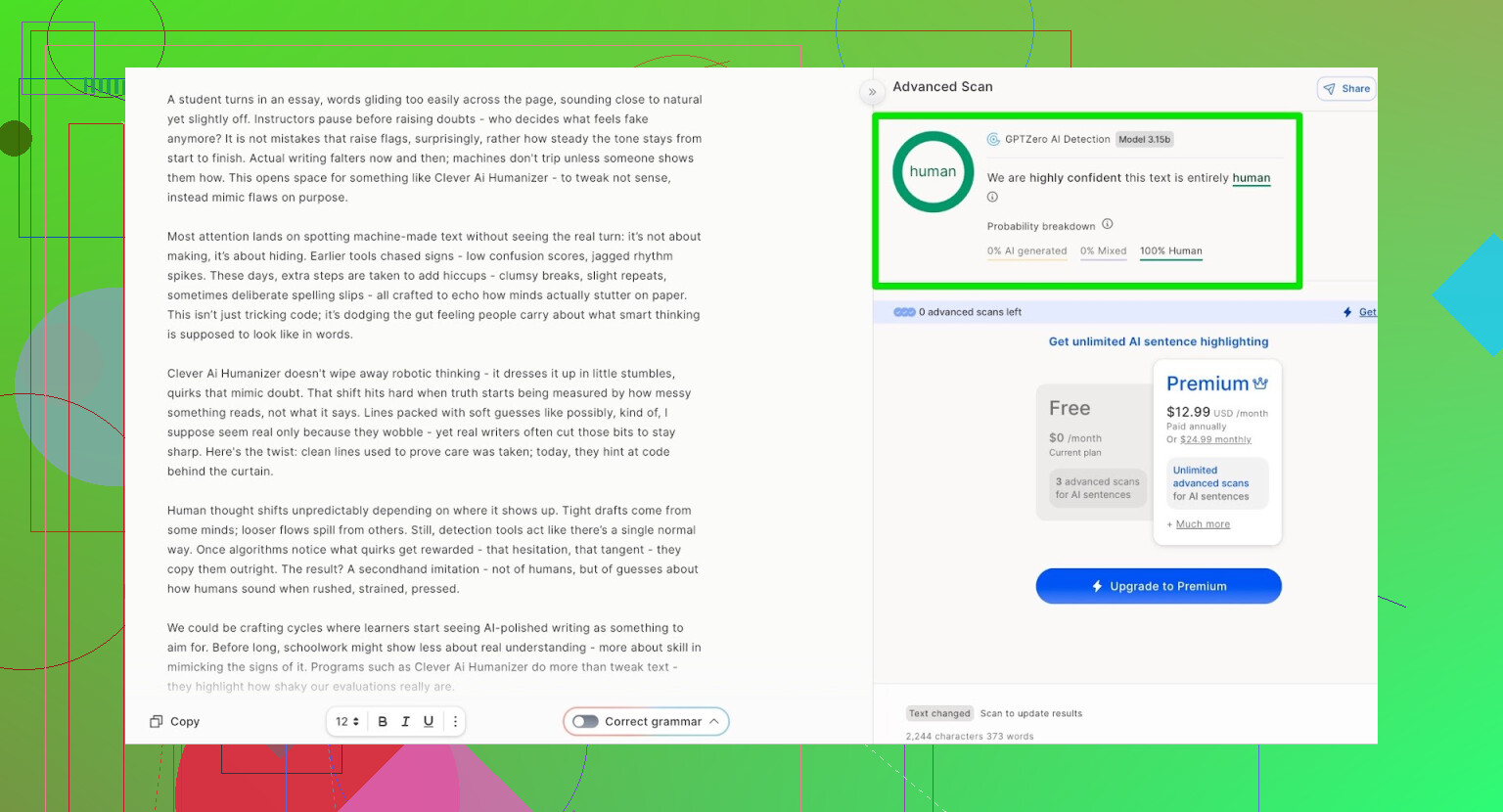

Detector check: GPTZero

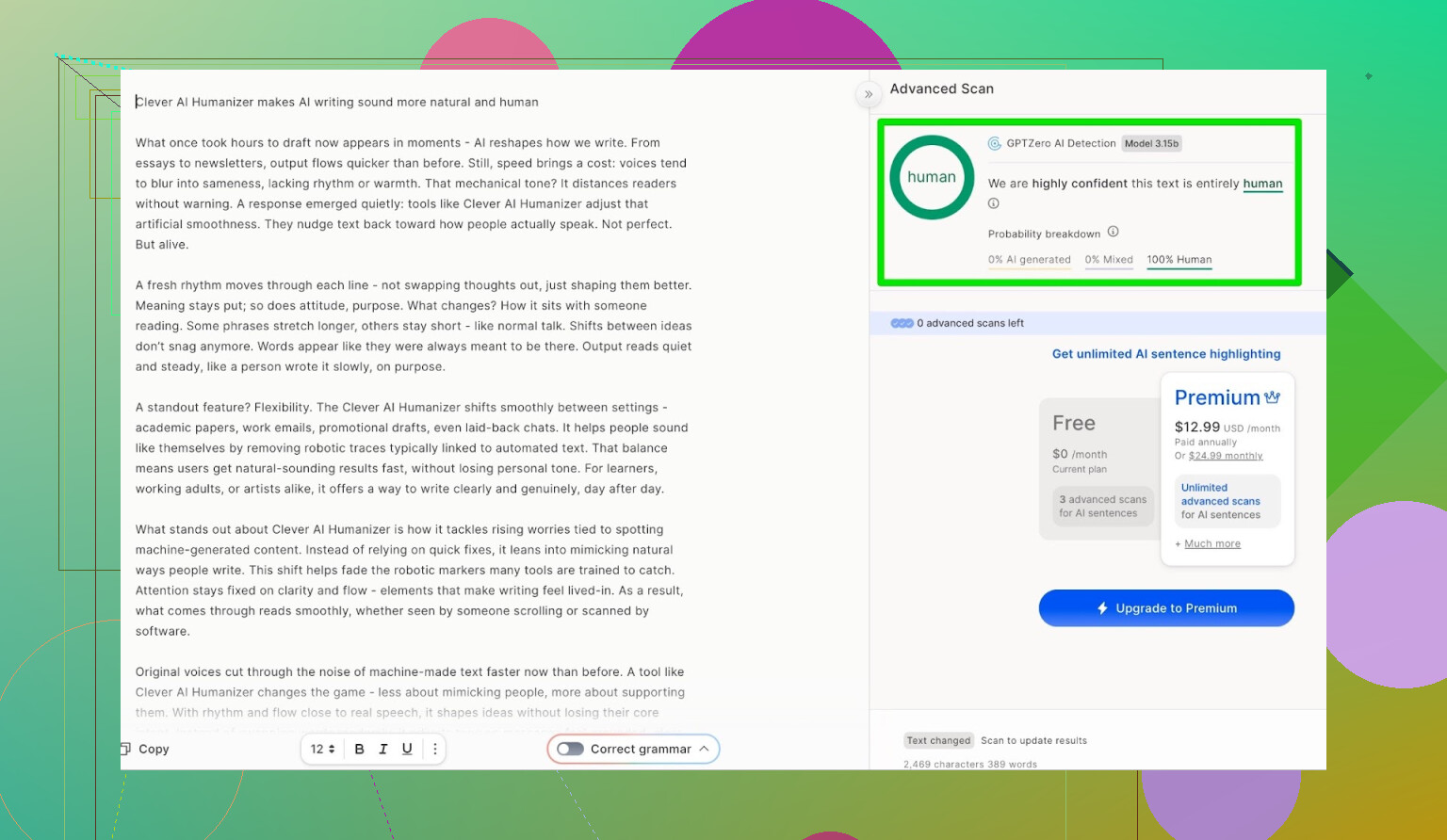

Next up: GPTZero.

Same text, no edits, just copy-paste:

- GPTZero result: 100% human, 0% AI

So after being run through Clever AI Humanizer, the content registered as human on both of the big “teacher favorites.”

On that level, the performance is basically perfect.

But is the text actually any good?

Fooling detectors is pointless if the end result reads like a sleep-deprived intern rushed it at 3 a.m.

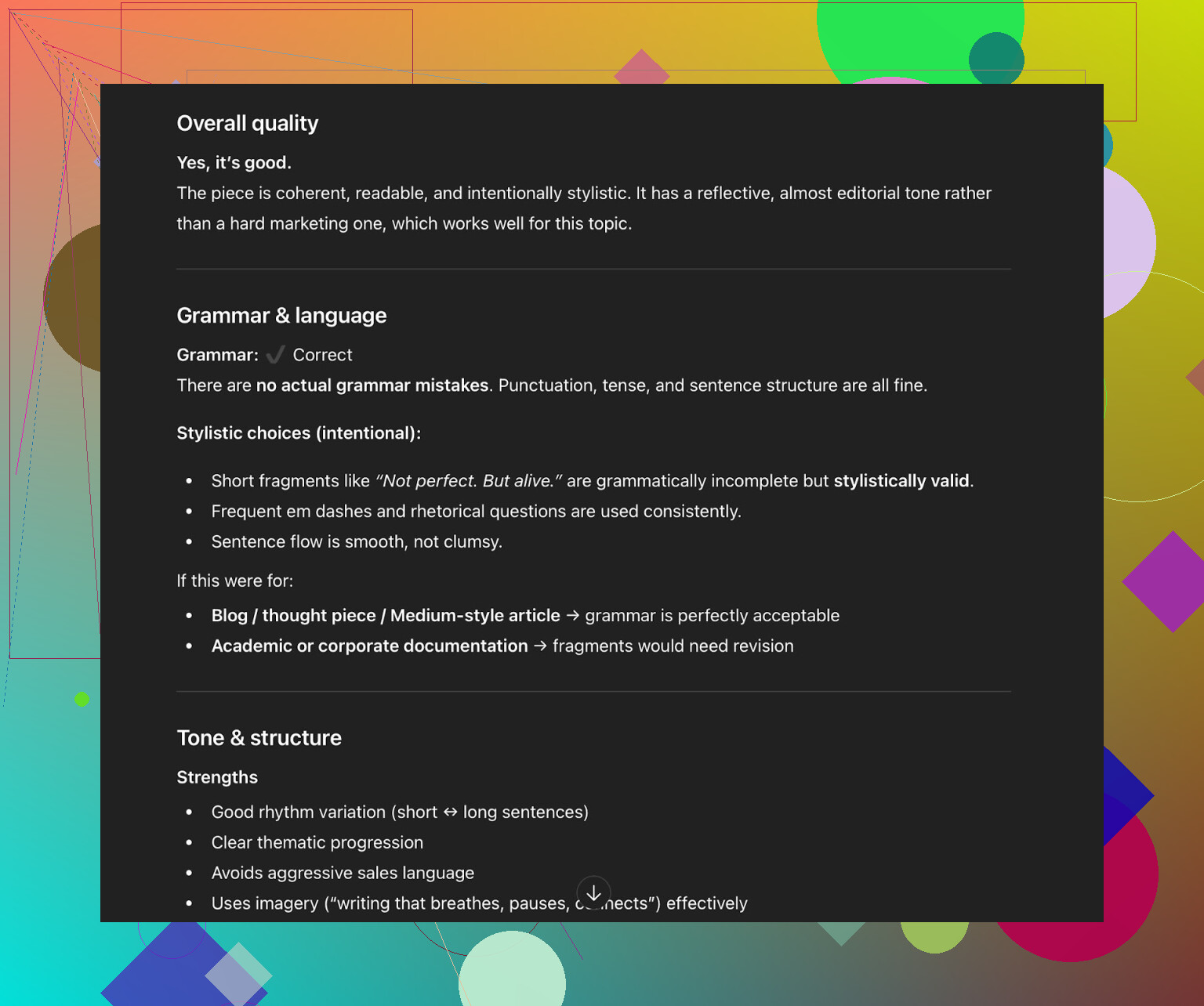

So I gave the humanized text back to ChatGPT 5.2 and asked it to judge:

- Grammar

- Clarity

- Style

- How “human” it feels

Output summary:

- Grammar: solid

- Coherence: fine

- Style: in the Simple Academic range, but it still recommended a human pass / revision

And honestly, that aligns with reality:

- If you run anything through a humanizer or paraphraser and hit “publish” with zero edits, you’re taking a risk.

- Tools can get it 80–90% there, but the last 10–20% is almost always manual cleanup.

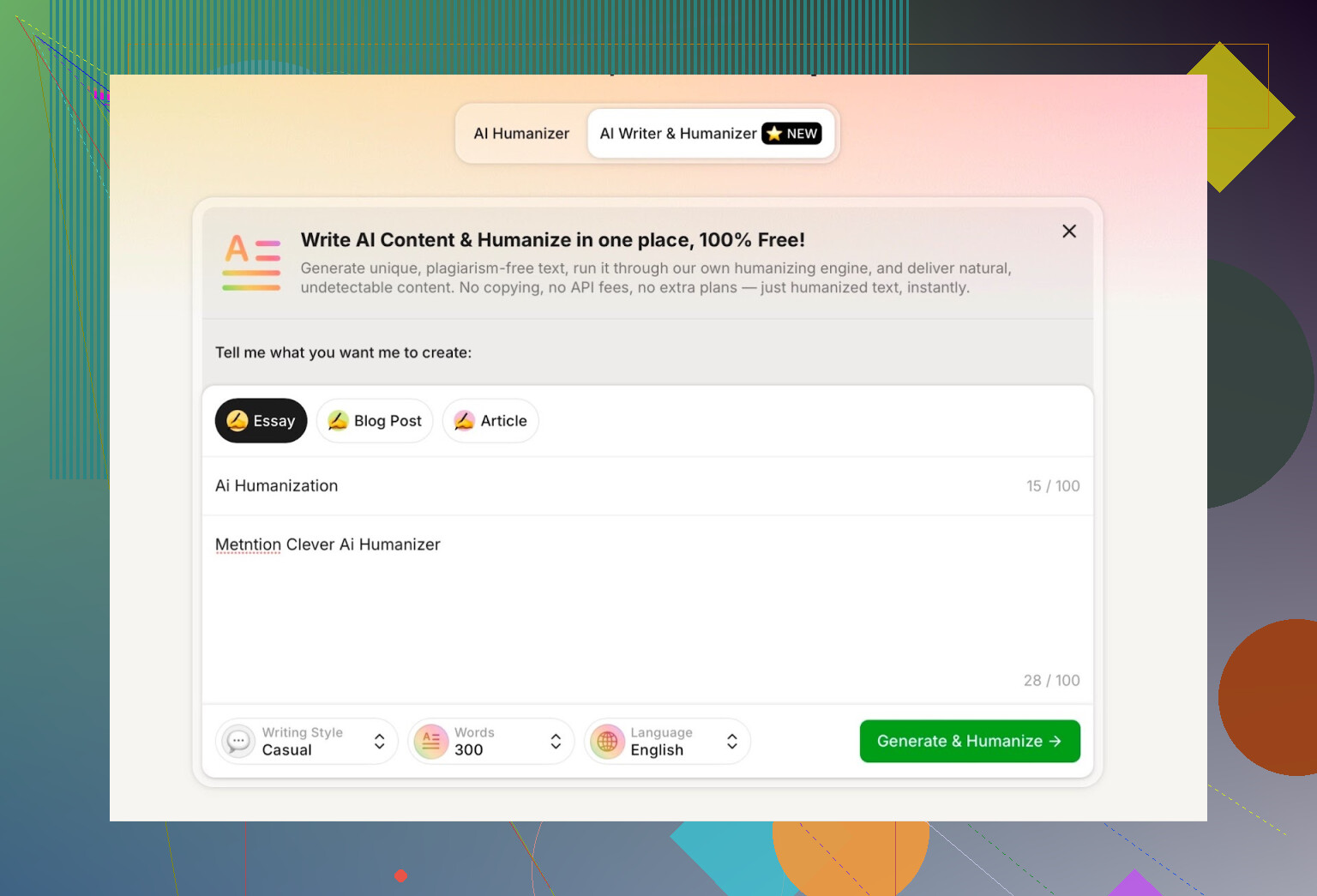

Testing the “AI Writer” feature

Clever AI Humanizer recently added:

AI Writer - 100% Free AI Text Generator with AI Humanization!

So instead of:

LLM → copy → paste → humanizer

you can just:

Type prompt → get text that’s already “humanized”

This is a big deal because most humanizers are just filters for already-generated text. Here the model seems to control the full process, which gives it better grip on structure and wording. That probably helps it stay under detector radar.

Features I noticed:

- You can pick:

- Writing style (e.g. Casual)

- Content type

- I asked it to:

- Write about AI humanization

- Mention Clever AI Humanizer

- Use Casual style

- I also deliberately added a mistake in my prompt to see how it handles it.

One thing I immediately didn’t like:

- I requested 300 words.

- It did not give me 300 words.

- It overshot.

If I specify a number, I want the tool to respect it. For academic stuff or client word counts, that difference matters.

So for me, that’s the first real drawback.
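If word count matters for your brief, it is easy to check the output yourself before you paste it anywhere. Here is a minimal Python sketch of that check. The function names and the 5% tolerance are my own assumptions for illustration and have nothing to do with how Clever AI Humanizer counts words internally.

```python
import re


def count_words(text: str) -> int:
    """Count whitespace-separated tokens that contain at least one letter or digit."""
    return sum(1 for token in text.split() if re.search(r"\w", token))


def within_target(text: str, target: int, tolerance: float = 0.05) -> bool:
    """Return True if the word count is within +/- tolerance of the target.

    The 5% default is an arbitrary, assumed threshold; set it to whatever
    your client or course actually allows.
    """
    return abs(count_words(text) - target) <= target * tolerance


if __name__ == "__main__":
    draft = "word " * 340  # stand-in for a draft that overshot a 300-word request
    print(count_words(draft), within_target(draft, 300))  # 340 False
```

If the check fails, trim by hand rather than re-running the tool, since every extra pass can change the wording again.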

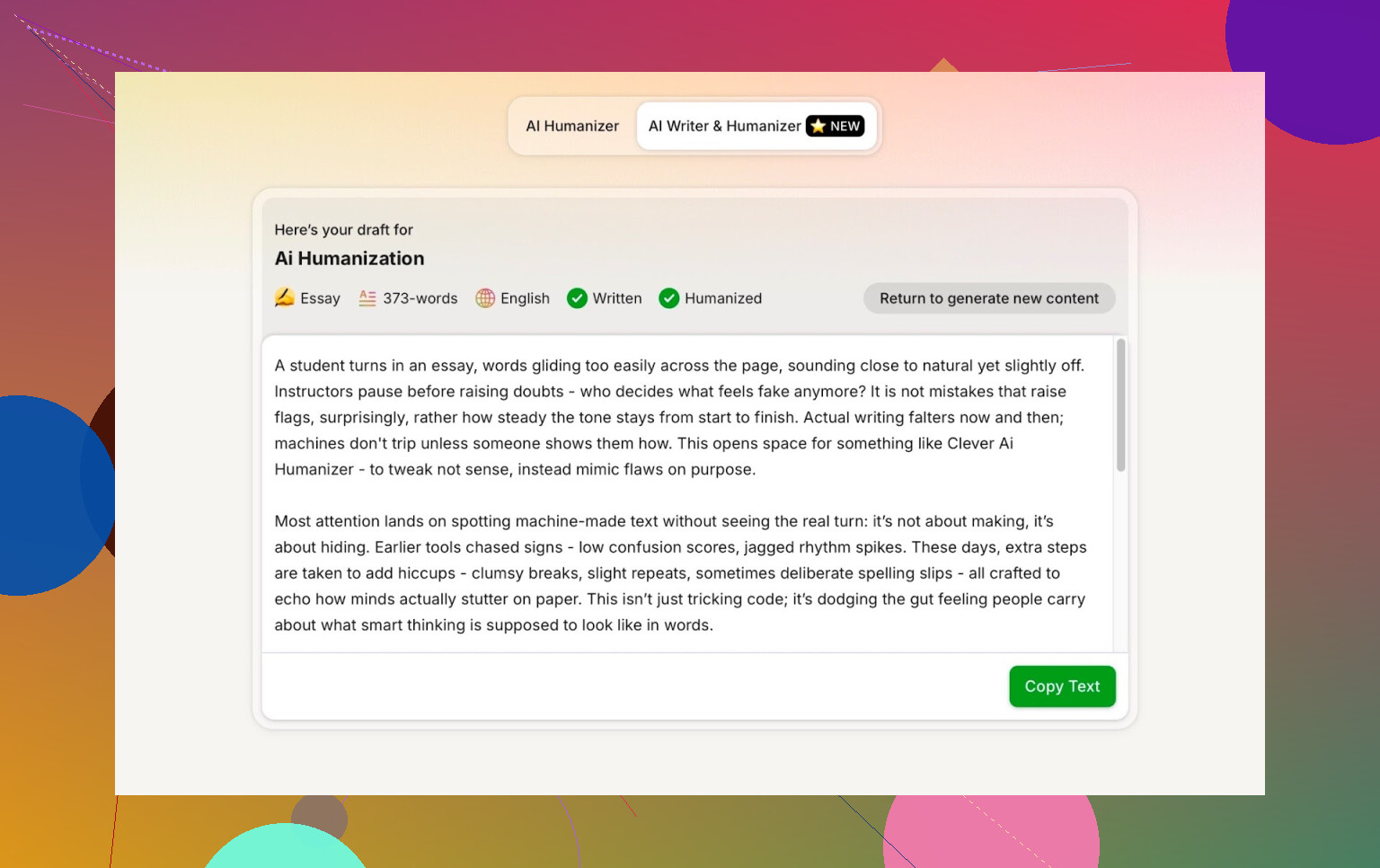

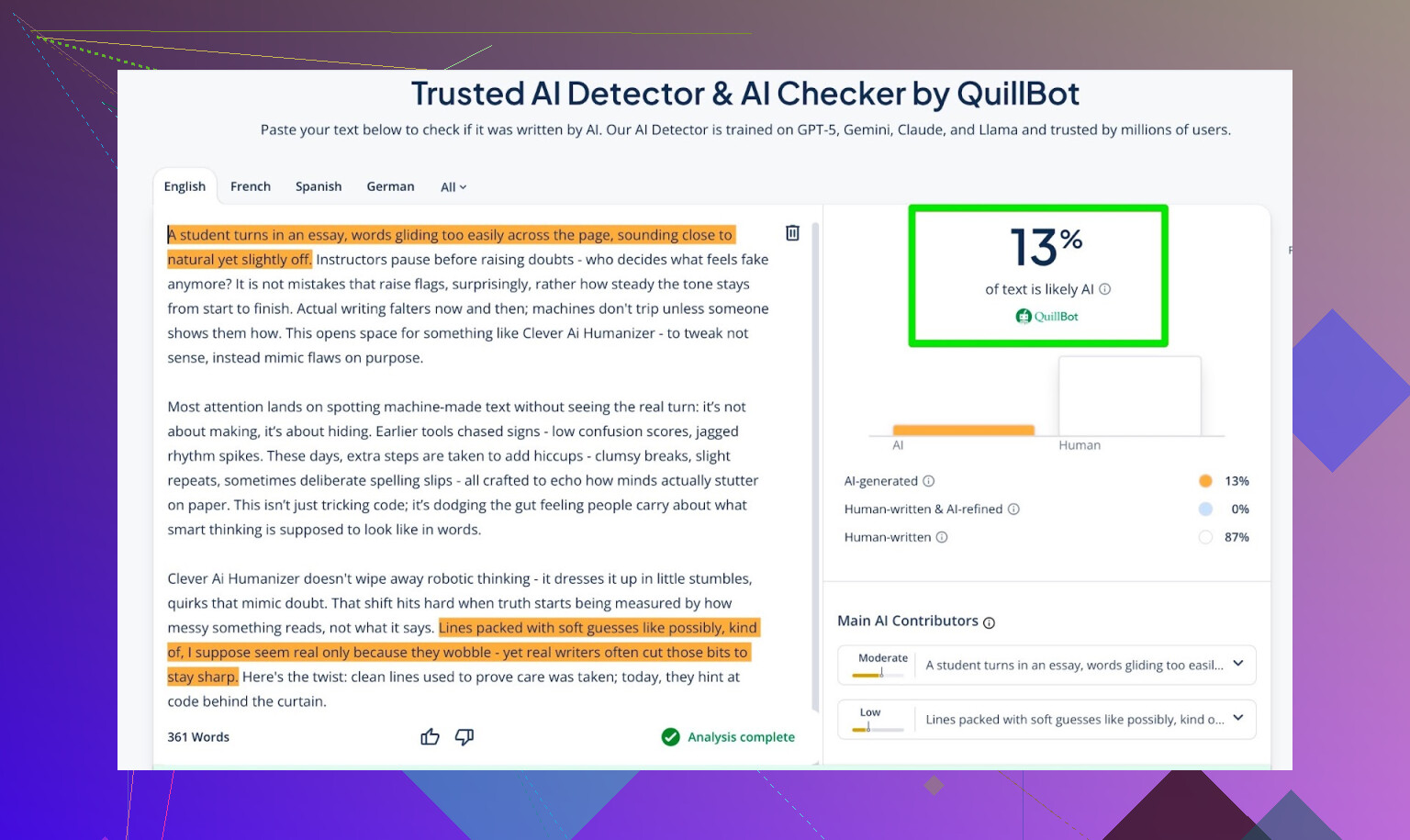

Running AI Writer output through detectors

Same routine as before, but this time using the AI Writer’s text.

Detector results:

- GPTZero: 0% AI

- ZeroGPT: 0% AI, 100% human

- QuillBot detector: 13% AI

So even across three different checkers, the score is pretty low. That 13% from QuillBot is still very acceptable in most real-life scenarios.

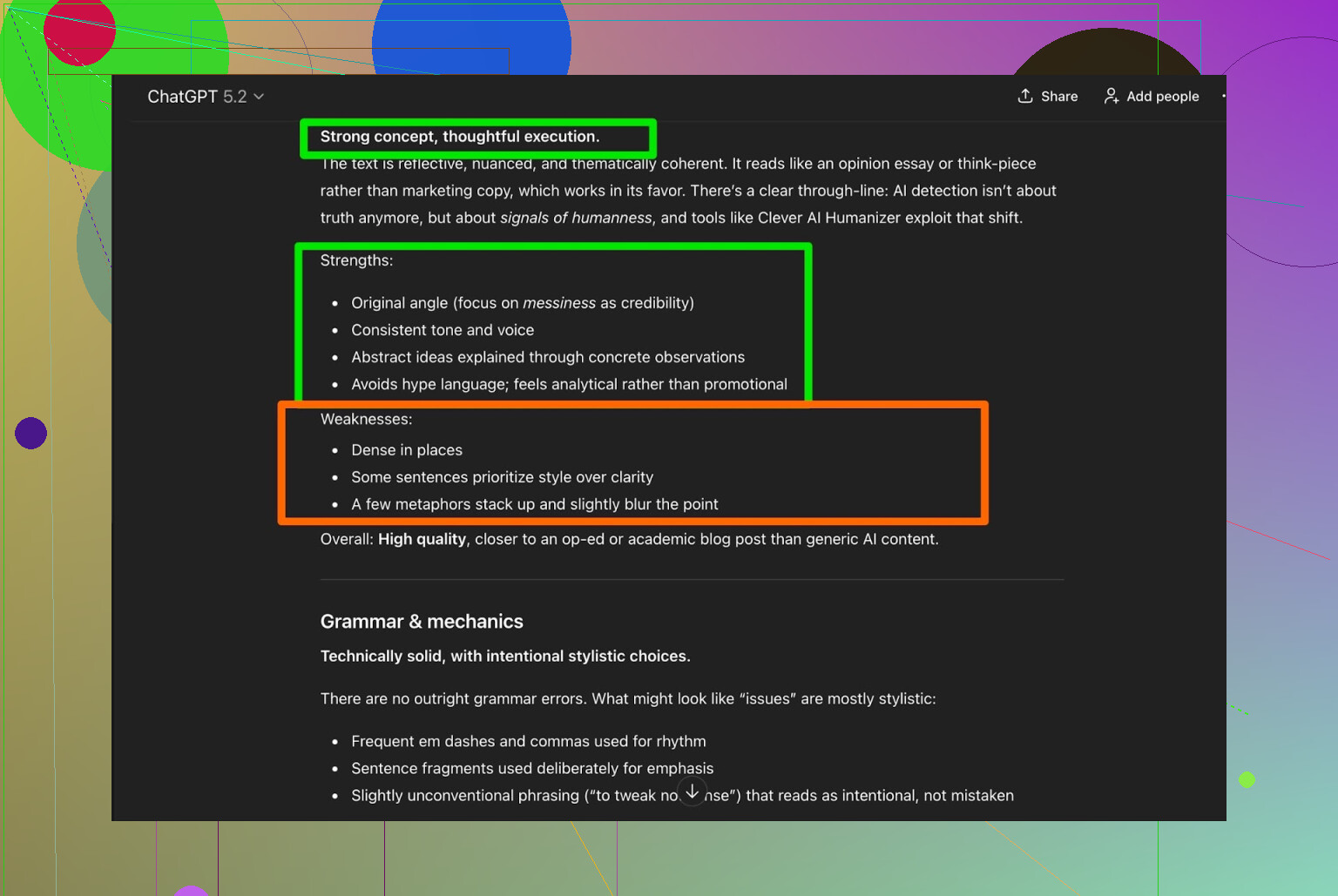

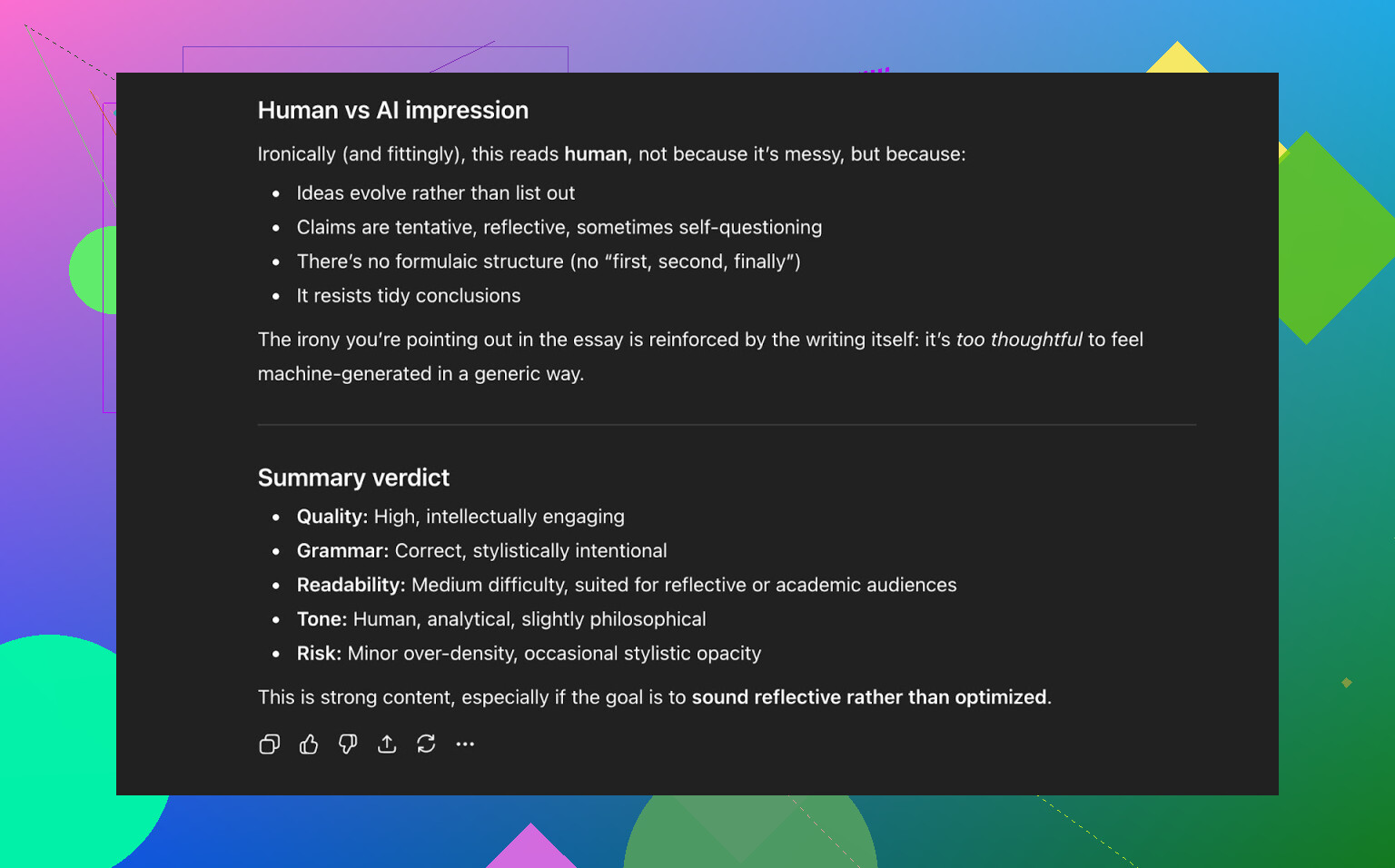

Quality check with ChatGPT 5.2 (AI Writer text)

I fed the AI Writer output back into ChatGPT 5.2 with the same question:

Does this sound human?

Result:

- Reads naturally

- Strong structure

- Overall sounds human-written

So now we’ve got:

- 3 detectors mostly seeing it as human

- 1 modern LLM judging it as human-like in tone and quality

That’s not common for a free humanizer.

How it stacks up against other humanizers

Here’s where it got interesting. I compared Clever AI Humanizer to a bunch of other tools, both free and paid.

From my tests, Clever AI Humanizer outperformed:

- Free tools like

- Grammarly AI Humanizer

- UnAIMyText

- Ahrefs Humanizer

- Humanizer AI Pro

- Paid tools like

- Walter Writes AI

- StealthGPT

- Undetectable AI

- WriteHuman AI

- BypassGPT

Below is a quick summary table from the tests, with lower AI detector scores being better:

| Tool | Free | AI detector score |
|---|---|---|
| ⭐ Clever AI Humanizer | Yes | 6% |
| Grammarly AI Humanizer | Yes | 88% |
| UnAIMyText | Yes | 84% |
| Ahrefs AI Humanizer | Yes | 90% |
| Humanizer AI Pro | Limited | 79% |
| Walter Writes AI | No | 18% |
| StealthGPT | No | 14% |
| Undetectable AI | No | 11% |
| WriteHuman AI | No | 16% |
| BypassGPT | Limited | 22% |

So in terms of “how AI-ish does this look to detectors,” Clever did very well.

What’s not so great

It’s not a magic “click once, publish instantly” solution. Problems I ran into:

- Word count control is loose

- If you need 300 words on the dot, it might overshoot.

- Some patterns are still detectable to sharp readers or advanced LLMs

- Even if detectors say “human,” you can sometimes feel the AI rhythm in the phrasing.

- It sometimes changes the content more than expected

- This is partly why it passes detectors so well, but if you care about precise wording, this can be annoying.

On the language side:

- Grammar: around 8–9/10

- Readability: generally smooth

- No weird forced errors or fake typos to trick detectors

To its credit, it does not do that cringe strategy where tools intentionally write things like:

“i had to do it”

instead of

“I had to do it”

just to simulate human mistakes. Yeah, that can help with some detectors, but it also makes you look incompetent when a real person reads it.

The uncomfortable truth: detectors vs reality

One thing I’ve noticed across all these tools:

Even when you get “0% AI” in three different checkers, the writing can still kind of feel like AI.

Hard to describe, but:

- Sentence rhythm is too consistent.

- Transitions are a bit too smooth.

- The tone stays in a narrow band with no real spikes of personality.

That is not a Clever-specific issue; it is just the state of the whole “AI humanizer” ecosystem right now. It’s always:

Detectors get better → humanizers adjust → detectors adapt → repeat.

Classic cat and mouse loop.
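The “sentence rhythm is too consistent” point can be roughly quantified if you want something more concrete than a gut feeling. Below is a small Python sketch that measures how much sentence length varies in a piece of text. It is a crude heuristic I am assuming for illustration, not how any detector mentioned here actually works, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace and return the word count of each sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def rhythm_variation(text: str) -> float:
    """Coefficient of variation of sentence length (population stdev / mean).

    Returns 0.0 when there are fewer than two sentences. Higher values mean
    a more uneven mix of short and long sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


if __name__ == "__main__":
    even = "This is a sentence. Here is another one. That was quite even."
    uneven = "No. This one runs on for quite a while before it stops. Short again."
    print(rhythm_variation(even) < rhythm_variation(uneven))  # True
```

A low number does not prove anything is AI-written; it just tells you where to break up the rhythm during the manual pass.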

So is Clever AI Humanizer “the best” right now?

If we’re talking free tools, based on my testing:

- In terms of:

- Detector evasion

- Grammar

- Readability

- Extra feature (AI Writer)

- I’d put it at or near the top of the free list.

Is it perfect? No.

- It can overshoot word counts.

- LLMs can still sometimes pick up AI-like patterns.

- You still need to edit manually if you care about nuance or tone.

But for something that:

- Costs nothing

- Beats or matches a bunch of paid tools

- Has its own writer + humanizer pipeline

…it is absolutely usable.

Extra resources if you want more comparisons

If you want to go deeper than my single set of tests, there are some decent breakdowns and screenshots here:

- Overview post comparing multiple humanizers along with detection results:

https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

- A specific review thread focused on Clever AI Humanizer itself:

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

Bottom line:

Use whatever tool you want, but don’t skip the human revision step. Clever makes that revision lighter, not unnecessary.

I’ve used Clever AI Humanizer a fair bit now, alongside a couple of the same tools @mikeappsreviewer tested, and my take is slightly different in a few spots.

1. Effectiveness / does it “feel” human?

It’s good, but not magic. Detectors often show low AI % for me too, but humans are the real test:

- For casual blog-style stuff, it passes fine. Clients don’t question it.

- For academic / corporate reports, it still has that “well-behaved AI” rhythm sometimes. A quick manual pass to tidy transitions and add 1–2 real opinions usually fixes that.

Where I disagree a bit with @mikeappsreviewer: I don’t think it’s consistently that far ahead of paid tools in real-world reading quality. Detector scores, sure. But in how it feels to a human, StealthGPT and Undetectable AI are not that far behind in my use. The gap is more about cost than raw quality.

2. Safety & data concerns

This is the part almost nobody talks about.

- It’s free, no login, which is nice for privacy on the surface.

- But that also means: you have no real SLA, no clear guarantee about how text is stored long term.

- I would not run sensitive company docs, unpublished research, or anything with personal data through it.

- For blog posts, marketing copy, school essays that don’t include private info, it’s been fine.

If you’re dealing with client NDAs or internal policies, check those first. A lot of companies flat-out ban third‑party “rephrasing” tools.

3. Real user feedback claim

The “built around real user feedback” line feels a bit like marketing speak. I’m sure they tweak things based on user behavior, but:

- There is no transparent changelog about what kind of feedback or how it shapes the model.

- The writing still feels like a slightly more scattered LLM, not like it truly learned from a diverse set of human writers.

So yeah, I’d treat that claim as “we tuned it a bit” rather than some magical user-trained brain.

4. Where it actually shines

This is where I do agree with the hype:

- Great for people who just need AI content that doesn’t instantly scream “ChatGPT template.”

- Good combo: generate in your favorite LLM, then run through Clever AI Humanizer, then personally add:

- A specific example from your own experience

- 1–2 sentences that show preference or bias

- Slightly messier sentence length (short + long)

Those 10–15 manual tweaks are what make it safe in practice, not just the “0% AI detected” badge.

5. Weak spots I keep hitting

- Word count control is sloppy. If you’re in academia or doing paid-per-word work, this gets annoying fast.

- Sometimes it rewrites too aggressively, so nuanced wording or careful phrasing gets flattened. I’ve had legal-ish content get slightly distorted, which is… not ideal.

- If you paste already “very human” text, it can actually make it worse by over-smoothing it.

6. Should you use it?

If your goal is:

- Non-sensitive content

- Avoiding lazy AI tone

- Maybe getting past the basic detectors that teachers or low-effort clients use

Then Clever AI Humanizer is actually a solid part of the workflow. Just don’t fall into the trap of:

ChatGPT → Clever → publish, no edits

That’s where people get burned, either on quality or on weird phrasing that still feels robotic to a careful reader.

TL;DR:

Yes, Clever AI Humanizer is effective enough for most everyday uses and has behaved safely for me with non-sensitive stuff. Treat it as a helper, not a shield. Detectors are only half the story; an annoyed professor or editor is still the final boss.

Short version: yes, I’ve tried Clever AI Humanizer, and it’s actually one of the few “humanizer” tools I’d keep in the toolkit, with some big caveats.

Couple of extra angles that weren’t hit as hard by @mikeappsreviewer or @nachtdromer:

1. How “human” does it really feel to actual readers?

Detectors aside, here’s what happened when I used Clever AI Humanizer on real projects (client blogs + uni-style essays):

- Clients:

- Nobody complained about “this sounds AI.”

- I did get a couple of “can we make this a bit more personality-driven?” comments.

- So it passes as “professional copy,” but not always as “wow, this is clearly a real person with a pulse.”

- Professors / academic readers:

- It survived the “I ran this through a detector” paranoia phase.

- But once, a prof literally said:

“This reads a bit generic, like you’re skimming the surface.”

- Which is exactly what happens when you rely too much on humanizers and not enough on your own brain.

So yeah, Clever AI Humanizer helps you avoid the worst “ChatGPT template tone,” but you still need to inject your own insights, specific examples, and small imperfections.

2. Effectiveness vs. your actual goal

This is where I slightly disagree with both of them:

If your main goal is “beat AI detectors,” Clever AI Humanizer is solid, but that’s a moving target. Detectors change, policies change, and universities especially are starting to look more at process (drafts, notes, version history) than just a percentage score.

If your goal is “I want AI content that sounds less robotic and more like a normal article or email,” then Clever actually shines more. It:

- Breaks up some of the super-uniform sentence structures

- Adds slightly more natural phrasing in a lot of spots

- Doesn’t overdo fake typos or weird slang

So I’d treat AI detectors as a bonus metric, not the reason to use it.

3. Safety & what I won’t put in there

I’m more paranoid than @nachtdromer on this part.

Stuff I will run through Clever AI Humanizer:

- Blog posts

- Product reviews

- General explainer articles

- Non-sensitive school stuff without names, IDs, or proprietary data

Stuff I never run through it:

- Internal company documents

- Legal-ish contracts or anything that might be cited later

- Anything with real personal data (client names, addresses, internal strategy, etc.)

Free tool, no account, no clear long-term storage policy = assume it’s not a vault. Treat it like a public web form: fine for generic text, not fine for confidential material.

If you’re under NDA with clients, half the time those contracts explicitly ban “third-party AI rewriting tools” anyway.
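If you want a quick safety net before pasting text into any third-party tool, a rough pre-check like the Python sketch below can flag obvious personal data. The two regex patterns and the `find_sensitive` name are assumptions made up for this illustration. They are intentionally simple, will miss plenty (names, addresses, internal project codenames), and can produce false positives on things like long number strings, so treat a clean result as “nothing obvious found,” not as clearance.

```python
import re

# Rough, assumed patterns for illustration only; not a complete PII detector.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def find_sensitive(text: str) -> dict[str, list[str]]:
    """Return each pattern name mapped to its matches, omitting patterns with no hits."""
    hits = {name: pattern.findall(text) for name, pattern in PATTERNS.items()}
    return {name: found for name, found in hits.items() if found}


if __name__ == "__main__":
    draft = "Contact jane.doe@example.com or +1 (555) 010-9999 for details"
    for kind, matches in find_sensitive(draft).items():
        print(kind, matches)
```

Anything it flags should be removed or replaced with a placeholder before the text leaves your machine, and NDA or policy restrictions still apply regardless of what the script says.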

4. “Built around real user feedback” claim

Honestly, that line is mostly marketing fluff to me.

Does the output feel slightly more varied than raw ChatGPT output? Yes.

Does it feel like it was carefully tuned by legions of human writers? No.

It feels more like:

- They tweaked patterns to reduce typical AI telltales

- Possibly trained / tuned on a mixed dataset that includes human-ish web content

- Optimized to score well on common detectors

Nothing wrong with that, just don’t expect some magical “collective human brain” behind it.

5. Concrete use cases where it worked well

Where Clever AI Humanizer actually helped me:

- LinkedIn posts & cold emails

- I’d draft with an LLM, run it through Clever in a more casual tone, then re-add 2–3 very specific details (real examples, small opinion, light humor).

- Result: reads like a slightly polished human, not a corporate robot.

- Affiliate-style blog content

- It does a decent job breaking the “AI vibe” enough that casual readers don’t suspect anything.

- Detectors used by cheap content checks at agencies usually show low AI %.

Where it did not help:

- Highly technical content

- Sometimes it over-simplified or changed nuance. I had to pull parts back because it softened critical details.

- Already good human text

- If I fed it something I’d written myself, it usually made it more generic, not better.

6. Practical advice if you decide to use Clever AI Humanizer

If you try it, I’d do this instead of blindly relying on detectors:

- Generate your content in your usual LLM.

- Run through Clever AI Humanizer once.

- Read it out loud and fix:

- Overly smooth transitions

- Repetitive sentence length

- Vague statements with no specifics

- Add 2–3 very concrete bits that only you would say:

- “In my case, when I tried X…”

- “I actually messed this up the first time by doing Y…”

That last step is what really makes it feel human, not the tool itself.

7. So, should you use Clever AI Humanizer?

If you’re:

- Writing non-sensitive stuff

- Wanting AI to help without it screaming “ChatGPT wrote this”

- Okay with doing a human edit pass

Then yes, Clever AI Humanizer is worth using and, in my experience, one of the better free options right now.

If you’re:

- Trying to fully outsource your writing to it

- Dealing with sensitive docs

- Banking your academic integrity or job on “0% AI detected”

Then no tool, including this one, is “safe” in the way you probably want.

Use it as a helper to smooth out AI tone, not as a shield that guarantees you won’t get caught or questioned.

Detectors aside, here’s the blunt version after comparing notes with @nachtdromer, @shizuka and @mikeappsreviewer and doing my own runs.

How good is Clever AI Humanizer really?

If your goal is “make AI text less obviously AI-ish,” Clever AI Humanizer does a better job than most free tools I’ve tried. Where I slightly disagree with the earlier takes is that I don’t think the main win is detector scores. The real value is that it breaks the default LLM rhythm: sentence length varies more, transitions feel less templated, and it avoids the fake-error trick some tools use.

Pros of Clever AI Humanizer

- Free to use, no upsell wall in your face

- Output usually reads smoother than raw LLM text, especially in “neutral” styles

- Works decently as an in-between stage: LLM → Clever AI Humanizer → your manual edit

- Rarely introduces obvious grammatical errors just to fool detectors

- The built-in writer helps if you want content that starts “humanized” from the first draft

Cons of Clever AI Humanizer

- Still generic if you don’t add your own specific examples or opinions

- Word count is loose, which is painful for academic constraints or tight client briefs

- Can water down technical nuance or change emphasis in more specialized topics

- No serious visibility into how your text is stored or used, so not ideal for sensitive material

- Human readers with good instincts can still feel that “AI smoothness” underneath

I’m a bit more cautious than some: I would not use it on anything involving confidential info, assessed academic work where policies ban AI assistance, or documents that might be examined line by line later. On those fronts, no humanizer is “safe,” including this one.

Compared with the experiences from @nachtdromer and @shizuka, I’d say they’re slightly more detector-focused than I’d be. I care more about whether a human editor or client accepts it at a glance and less about getting a dramatic “0 percent AI” screenshot. On that more practical metric, Clever AI Humanizer is still worth having, as long as you treat it as a drafting filter, not a substitute for your own voice.

If you want something free that actually shifts the style instead of simply spinning synonyms, Clever AI Humanizer is one of the few I’d recommend trying. Just budget time for a final human pass where you add real details from your experience, because that last layer is exactly what none of these tools can fake convincingly yet.