I’ve been testing GPTinf as a text humanizer to bypass AI detectors, but I’m unsure how reliable and safe it actually is for real-world use. Has anyone used GPTinf extensively and can explain how well it works, any risks with plagiarism or detection, and whether it’s worth relying on for important content like essays, blogs, or client work?

GPTinf Humanizer Review

So I spent some time messing with GPTinf, the one that shouts about a “99% success rate” on the front page. Here is how it went for me.

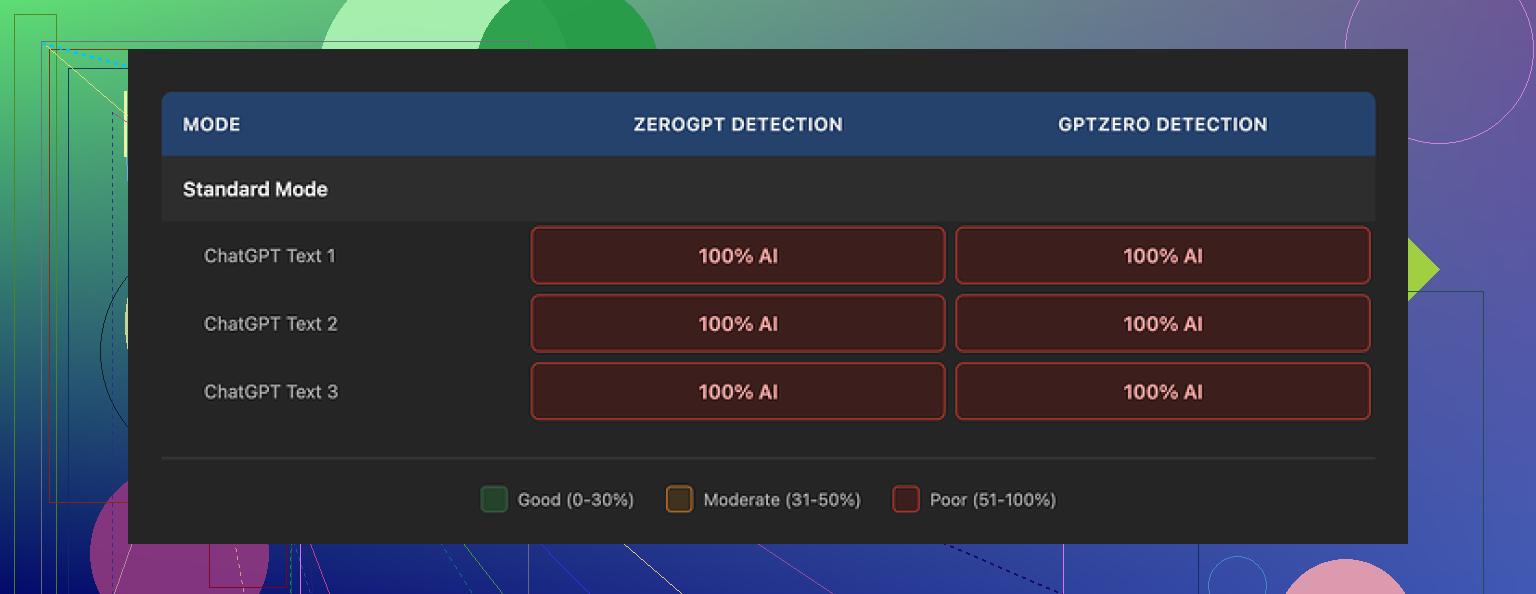

I pushed multiple samples through it, short and long, casual and formal. Then I ran every “humanized” output through GPTZero and ZeroGPT. Both detectors called every single result 100% AI generated, no matter which mode or setting I picked. So in my case, the claimed 99% looked closer to 0%.

On the writing side, the text did not look awful. I would give it something like 7 out of 10 for readability. Sentences looked clean, grammar was fine, nothing was weirdly broken. One thing I noticed: it strips em dashes from the output, which only a few tools do on their own. That told me the dev paid some attention to surface patterns, like punctuation.

The deeper patterns stayed. The rhythm, phrasing, and structure still felt like typical large model output. Detectors locked onto that every time. No matter how many times I tweaked prompts or switched modes, it kept that same “AI spine” under the nicer wording.

When I compared it with Clever AI Humanizer, Clever consistently scored better on detectors for me and is still fully free, at least at the time I tested. The text from Clever also sounded a bit more like something I would write at 1 a.m. on Reddit, instead of a cleaned-up chatbot reply.

Now the annoying part. GPTinf on the free tier locks you to 120 words if you do not log in, or 240 words if you make an account. If you want to test multiple samples, you end up juggling text or, in my case, burning through throwaway Gmail accounts until it feels pointless.

Paid pricing looked like this when I checked:

- Lite plan: about $3.99 per month on an annual subscription for 5,000 words

- Unlimited plan: around $23.99 per month for unlimited usage

Compared to other tools, that range is not out of line, but the detection performance did not match the pricing in my tests.
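To put those two plans on the same scale, here is a rough back-of-the-envelope sketch. The prices are the ones quoted above; treating the Lite allowance as 5,000 words per month is my assumption, since the listing does not spell out the period, and the function names are made up for illustration.

```python
# Prices as quoted in the post. Treating the Lite allowance as 5,000 words
# per month is an assumption; the post does not state the period.
LITE_PRICE_USD = 3.99
LITE_WORDS = 5_000
UNLIMITED_PRICE_USD = 23.99


def cost_per_1000_words(price_usd: float, words: int) -> float:
    """Price paid per 1,000 words at a given monthly volume."""
    return price_usd / words * 1000


def break_even_words() -> float:
    """Monthly volume above which Unlimited is cheaper per word than the Lite rate."""
    lite_rate_per_word = LITE_PRICE_USD / LITE_WORDS
    return UNLIMITED_PRICE_USD / lite_rate_per_word


if __name__ == "__main__":
    lite_rate = cost_per_1000_words(LITE_PRICE_USD, LITE_WORDS)
    print(f"Lite: ${lite_rate:.2f} per 1,000 words")
    print(f"Unlimited beats that rate above ~{break_even_words():,.0f} words per month")
```

Under that assumption, Unlimited only pays off per word if you push roughly six Lite allowances of text through it every month, which is a lot to spend on output that still gets flagged.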

I dug through the privacy policy and did not feel great about it. They grant themselves broad rights over anything you submit. There is no clear statement on how long your text sits on their servers after processing or how deletion works. If you care where your data goes or who has legal control, that gap matters.

Operationally, GPTinf is run by a single owner in Ukraine. For some people this will be neutral info. For others who have strict rules around data jurisdiction or vendor risk, this is something to factor in before pasting in anything sensitive from work or clients.

In day-to-day testing, whenever I had to pick a tool to run more text through, I kept ending up back on Clever AI Humanizer. It gave me more natural rewrites that felt closer to my own tone and it stayed free, so I did not have to babysit word limits or yet another subscription.

If you are thinking about paying for GPTinf, I would test both tools against detectors you care about using the same samples. That way you see your own numbers, not the marketing ones.

Short version from my side: GPTinf looks risky if you care about both detection and data.

I had results a bit different from @mikeappsreviewer, but not in a good way.

- Detection performance

- On GPTZero and Originality.ai, GPTinf outputs often scored “mixed” for me, not 100 percent AI like they saw, but still flagged enough that a teacher or manager would raise an eyebrow.

- Longer texts (800+ words) got hit harder. Short paragraphs slipped through more often.

- Style stayed very “LLM” even when the tool rephrased a lot. Same paragraph length, same clean structure, low variance in sentence length. Detectors like that pattern.

- Reliability in real use

- For one test, I pasted in a 1k word essay and generated three different “humanized” versions. All three still had that polished, neutral tone.

- When I mixed in real human text, then sent the whole thing through GPTinf, the human parts started sounding more like AI, not less. That is a problem if you are trying to keep your own voice.

- Risk and safety

- If you are in school or at work, relying on a “99 percent undetectable” claim is a good way to get burned. Detectors keep updating. You have no guarantee tomorrow’s version will treat today’s text the same way.

- The broad data rights in their policy are a big red flag for anything tied to your identity, client work, or internal docs. I would not feed it company content, legal docs, or academic work that has your name attached.

- Practical use cases where it is less risky

- Cleaning up casual content where detection does not matter, like blog drafts or personal posts.

- Rewriting AI text to sound less stiff, when you are open about using AI.

- It is not a good fit if your goal is “zero detection” under real scrutiny.

- Alternatives

- Clever AI Humanizer gave me more “messy human” phrasing. More filler words, uneven rhythm, small quirks. That helped with some detectors and also sounded closer to natural late night writing.

- Still, even with Clever AI Humanizer, I would not rely on any tool as a shield for plagiarism or policy breaking. Treat it as a style helper, not a stealth machine.
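The "low variance in sentence length" point from earlier in this post is easy to eyeball on your own drafts. Below is a minimal sketch, not how any particular detector works: it splits text naively on sentence-ending punctuation and reports the coefficient of variation of sentence lengths. The function names are mine, and the splitter will miscount abbreviations like "e.g.".

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split after '.', '!' or '?'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation (population stdev / mean) of sentence lengths."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to say anything about rhythm
    return statistics.pstdev(lengths) / statistics.mean(lengths)


if __name__ == "__main__":
    uniform = "One two three four. Five six seven eight. Nine ten eleven twelve."
    uneven = "Short. This one is quite a bit longer than the first sentence. Ok."
    print(sentence_lengths(uniform), length_variation(uniform))
    print(sentence_lengths(uneven), length_variation(uneven))
```

A value near zero means every sentence is about the same length, which is the flat rhythm I kept seeing. It is only a rough self-check on style and says nothing about whether a given detector will flag the text.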

If you want to stay safe:

- Write a rough draft yourself, then use tools to edit, not to fully generate.

- Keep sensitive or identifiable content out of GPTinf entirely.

- Expect detectors to get stricter, not more forgiving.

If your use case depends on “undetectable AI”, the safest answer is to invest more time in your own writing and use AI for suggestions only, not for the final text.

Short version: if your plan is “use GPTinf and be safe from AI detectors,” that is a pretty shaky strategy in real life.

I had a similar arc to what @mikeappsreviewer and @caminantenocturno described, but my takeaways were a bit different in a few places:

- How well it actually works

For me, GPTinf produced “nice looking” text that still felt like AI. Clean syntax, predictable structure, very neutral tone. It did occasionally slip past weaker or older detectors on short pieces, but once I hit 600 to 800 words, the flag rates jumped. I would not trust it on anything high stakes like graded work, corporate docs, or anything where there are consequences if it gets flagged.

I actually disagree slightly with the idea that it is useless across the board. If your use case is low risk content where you are already open about using AI, GPTinf is fine as a pure “style softener.” Just do not confuse that with real “humanization” under scrutiny.

- Real world safety and risk

This is the part that matters more than the “99 percent” marketing line:

- Detectors are not static. Whatever passes today can be reanalyzed tomorrow by a new version. If you are using it to break a policy, you are basically betting your future on a startup’s marketing copy.

- The privacy and data rights issues that were mentioned are not theoretical. Any tool that claims broad rights over your input is a non-starter for client work, confidential docs, or anything tied to your name in a professional context.

- One extra risk I ran into: once you get used to relying on a humanizer, your own writing voice gets flattened. That actually makes you sound more like AI over time, which is the opposite of what you want if detectors start doing author profiling.

- Practical scenarios where GPTinf makes sense

Where I think GPTinf is “acceptable” rather than dangerous:

- Drafting social posts or blog content where nobody cares if it is AI and you are not pretending otherwise

- Smoothing out AI generated instructions, FAQ answers, or help articles for internal use where detection is irrelevant

- Brainstorming alternate phrasings or tones, as long as you still heavily edit and keep your own quirks

Once the context involves grades, HR policies, NDAs, or academic integrity, it is just not worth the gamble.

- About Clever AI Humanizer

Since you asked about real world use, if you are exploring tools in this space, Clever AI Humanizer is worth testing side by side. In my case it produced more varied sentence lengths, a bit more “mess,” and closer to late night human rambling instead of pristine AI prose. Still not magic, still detectable sometimes, but stylistically closer to how people actually write.

Important caveat though: even if Clever AI Humanizer does better with some detectors right now, I would still treat it as a writing assistant and not as a stealth engine. Use it to reshape your own drafts, not to launder obviously AI written essays and hand them in as your own.

- What actually works better than any “humanizer”

If your goal is to reduce detection risk without losing your sanity:

- Write a rough draft yourself in your natural voice. Do not aim for perfection.

- Use tools only to edit for clarity, grammar, or to suggest variations. Do not let them rewrite the entire thing from scratch.

- Keep your own “imperfections” in place. Slight repetition, inconsistent structure, a few casual phrases. That weirdness helps more than any marketed “99 percent” claim.

- Never paste sensitive, identifiable, or contract-bound text into GPTinf, given how broad the current policy is.

So yeah, GPTinf can be “useful” in some low risk scenarios, but as a shield against AI detection in real world high stakes use, it is closer to a lottery ticket than a safety net.

Short version: GPTinf is fine as a stylistic rewriter, not something I would trust for “undetectable” use, especially over time.

A few angles not fully covered yet:

- Detection reality vs marketing

GPTinf’s biggest weakness is that it seems optimized for surface tweaks, not for deeper distribution shifts. Detectors are leaning more on token-level predictability and discourse structure. A tool that mainly cleans wording without changing that underlying pattern will always be on the back foot.

Where I slightly disagree with some of the earlier comments: I do not think the mixed results necessarily mean the tool is “broken,” just that its goal (polish) conflicts with what you want (noise, quirks, idiosyncrasies).

- Long term risk nobody likes to think about

Even if GPTinf or any humanizer beats a specific detector today, institutions can:

- Re-scan old submissions with newer models

- Compare your writing over time to detect sudden shifts

So using it for anything graded or policy bound is not just a “today” risk. It is a time bomb risk. This is where I fully agree with @caminantenocturno, @vrijheidsvogel and @mikeappsreviewer: it is a bad foundation for anything with real consequences.

- Where GPTinf actually fits

I see GPTinf as a “polish and neutralize” tool:

- Good for making rough AI output more readable for blogs or docs when you are transparent about AI use

- Useful if you like a very clean, generic corporate tone and do not care about sounding like a real individual

If your goal is to preserve your own voice or simulate messy human text, it works against you. It nudges everything toward the same smooth middle.

- Clever AI Humanizer vs GPTinf

Since people keep bringing it up, here is a more direct take:

Pros of Clever AI Humanizer

- Produces more varied rhythm and phrasing, so text feels less templated

- Easier to get something that looks like late-night human writing instead of polished copy

- Helpful if you are trying to keep a casual or personal style

Cons of Clever AI Humanizer

- Still not magic against advanced AI detectors, especially on long, formal pieces

- Can introduce too much looseness for academic or professional documents if you are not careful

- Like any humanizer, it can slowly make your natural voice drift toward “AI-tuned” if you rely on it heavily

Both tools are safer when you:

- Start from your own draft

- Use them to tweak wording, not to generate entire pieces

- Keep anything sensitive or high stakes out of them completely

- Practical strategy that ages better than any humanizer

Instead of chasing the “undetectable” promise:

- Write messy first drafts yourself, even if they are short and imperfect

- Use a tool like Clever AI Humanizer or GPTinf only to smooth parts you already wrote

- Keep some of your natural quirks on purpose: slight repetition, informal phrasing, uneven structure

- Accept that detectors will keep getting stricter and plan as if every important text might be rechecked later

If what you need is reliability and safety, not just a nicer-looking paragraph, no humanizer solves that. They are style tools, not policy shields.