I’m trying to figure out how to properly set up and optimize a GPTHuman AI Review workflow, but I’m not sure if I’m doing it correctly or missing important steps. I need help understanding best practices, common mistakes to avoid, and how others are successfully using GPTHuman AI Review so I can improve the accuracy and reliability of my reviews.

GPTHuman AI review, from someone who spent too long testing it

GPTHuman’s headline is “The only AI Humanizer that bypasses all premium AI detectors.” I went in curious, not optimistic. I ran it through my usual test routine anyway.

Here is what happened.

You can see the original writeup and screenshots here:

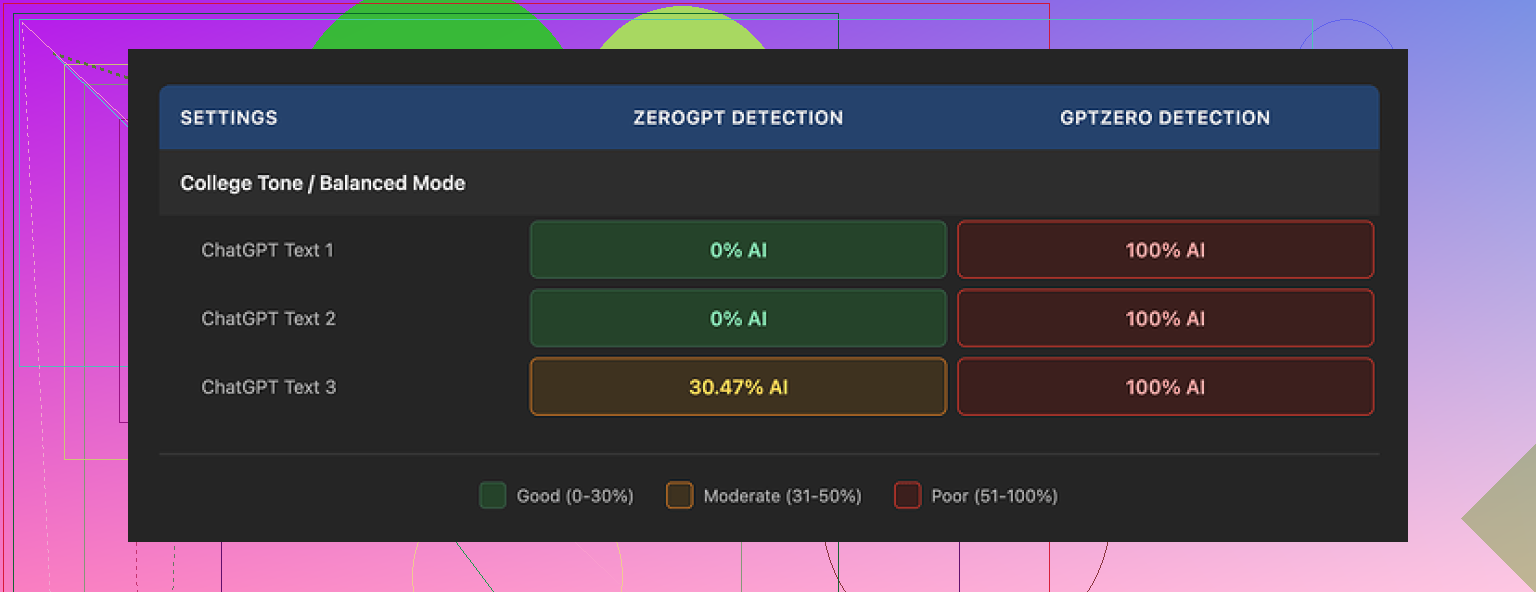

How it did against AI detectors

I took three different AI-written samples, ran each through GPTHuman, then sent the outputs to two detectors.

Tools used:

- GPTZero

- ZeroGPT

Results:

GPTZero

- All three GPTHuman outputs flagged as 100% AI.

- No borderline scores, no “mixed” result, just full AI every time.

ZeroGPT

- Two samples came back as 0% AI.

- The third one got flagged at around 30% AI.

So on one detector it failed completely. On the other it passed twice and got clipped once. That is not “bypasses all premium AI detectors.”

Inside GPTHuman there is a “human score” meter that shows how “natural” the text is supposed to be. During my runs, those internal scores looked good, but they did not match what external tools reported. So if you rely on the built-in score, you get a false sense of safety.

Text quality and grammar

Readability on the surface looks fine. Paragraphs come out in neat blocks, no weird spacing, nothing visually broken.

Then you read it slowly.

Patterns I kept seeing:

- Subject verb issues

  - Example feel: “The users is expected to” type mistakes.

- Sentences that trail off or never land

- Word swaps that do not fit the sentence

  - You see a word that belongs in a different context jammed into a normal phrase.

- Closings that stop making sense near the end of the text

One of my outputs started strong for two paragraphs, then the last few lines felt like a drunk autocorrect. You could guess what it wanted to say, but you would not send it to a client without rewriting the whole last section.

If you are trying to pass as human to an editor, teacher, or hiring manager, these glitches stick out. It reads like someone who half knows English, or like rushed AI content with light edits.

Limits, pricing, and some rough edges

Free tier

- Hard cap of about 300 words total, not per run.

- Once you hit that, you are locked out.

- I had to spin up three new Gmail accounts to push through my normal test set. That alone told me enough about “free” usage.

Paid plans (monthly prices as quoted for annual billing during my test):

- Starter: around $8.25/month

- Unlimited: around $26/month, but each output is locked to 2,000 words max

So “Unlimited” applies to how many times you run it, not how long each piece can be. If you work with longer reports, ebooks, or full manuals, you end up slicing content into chunks and stitching them back together.
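To put a number on the slicing, here is a tiny Python sketch. The function name `runs_needed` is mine, and it assumes the output is roughly as long as the input, so treat the result as a lower bound rather than an exact count.

```python
import math


def runs_needed(total_words: int, cap: int = 2000) -> int:
    """Minimum number of separate runs when each output is capped at `cap` words."""
    if total_words <= 0:
        return 0
    return math.ceil(total_words / cap)


# A 12,500 word manual cannot fit in six runs of 2,000 words each.
print(runs_needed(12_500))
```

In practice you would use more runs than this, since cutting exactly at the cap usually lands mid-paragraph.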

Refunds and data usage, worth reading closely:

- All purchases are non refundable. No trial safety net once you pay.

- Your text is used for AI training by default. There is an opt out, but you have to go find it.

- They also say they keep the right to use your company name in their marketing unless you reach out and tell them not to.

If you work under NDAs or handle client sensitive material, those last two points matter more than any “humanizing” feature.

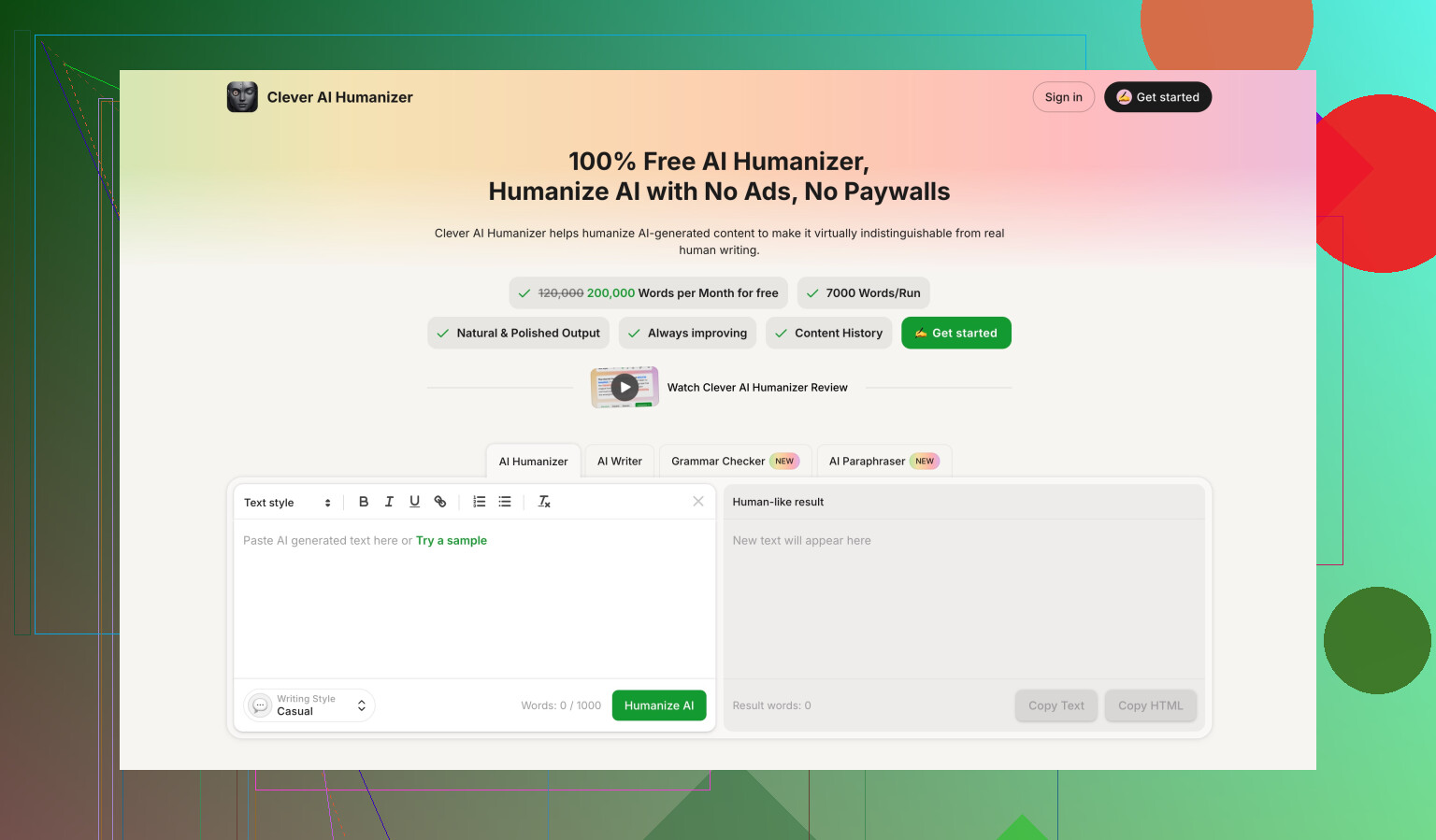

Compared with Clever AI Humanizer

While I was running these tests, I also benchmarked Clever AI Humanizer using the same detector setup and similar prompts.

Quick contrast:

- Clever AI Humanizer scored stronger on external detectors in my runs.

- It stayed free during testing, without the 300 word total cap problem.

- Better alignment between what it claimed and what the detectors showed.

You can see the full comparison screenshots in their thread:

Who this might fit, and who should skip it

If you:

- Only need short pieces.

- Do not mind cleaning up grammar yourself.

- Are okay with inconsistent detector performance.

Then you might squeeze some value out of GPTHuman on smaller tasks.

If you need:

- Reliable detector evasion for school, work, or publishing.

- Clean grammar out of the box.

- Longer documents without chopping content into segments.

- Clear data ownership and refund safety.

My experience says look elsewhere first, and treat the “bypasses all premium AI detectors” claim as marketing, not something you should bet anything important on.

You are not missing some magic hidden toggle in GPTHuman. The “workflow” ceiling is mostly the tool itself.

Here is how I’d set it up so you get sane output without wasting time, plus where I disagree a bit with @mikeappsreviewer.

Start with the right type of input

• Keep your raw AI draft clear and simple. Short sentences.

• Avoid stuffing it with rare words or complex structure.

GPTHuman tends to break when the base text is already stylized.

If you feed in polished, fancy copy, you often get the “drunk autocorrect” effect he described.

Work in small segments on purpose

The 2,000 word hard limit is annoying, but I would not push anywhere near that.

For better control, stick to 200 to 400 word chunks.

Benefits:

• You catch grammar glitches earlier.

• You reduce topic drift at the end of sections.

• You can adjust settings per chunk if GPTHuman adds odd word swaps.
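If you would rather not count words by hand, here is a minimal Python sketch of the chunking. It assumes paragraphs are separated by blank lines; `split_into_chunks` and the 400 word default are my own choices, not anything GPTHuman provides.

```python
def split_into_chunks(text: str, max_words: int = 400) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most `max_words` words.

    A single paragraph longer than `max_words` is kept whole as its own chunk
    instead of being cut mid-sentence.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    current_words = 0
    for para in paragraphs:
        n = len(para.split())
        # Close the current chunk if adding this paragraph would exceed the limit.
        if current and current_words + n > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(para)
        current_words += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on paragraph boundaries keeps each chunk readable on its own, which makes the broken endings easier to spot.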

Set a clear internal process

A simple, repeatable flow works better than chasing the “human score” bar. For example:

Step 1 Generate base text in your main AI (ChatGPT, Claude, etc).

Step 2 Split into 200 to 400 word pieces by logical subheadings.

Step 3 Run each piece through GPTHuman. Ignore its internal human score.

Step 4 Run quick checks:

• Grammarly or LanguageTool for grammar.

• Your own read aloud pass to catch weird phrasing.

Step 5 Stitch pieces back together. Do a final pass for tone and flow.

Use multiple detectors, but for calibration, not blind trust

Here is where I see it a bit differently from @mikeappsreviewer.

I would not judge any humanizer from GPTZero alone. GPTZero is aggressive and often flags even human text.

A better pattern:

• Test a few of your own human samples in GPTZero and ZeroGPT.

• See how often they are flagged.

• Then compare GPTHuman outputs against that baseline.

If your human writing also gets flagged a lot, “100 percent AI” on GPTHuman tells you less than it seems.
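A rough sketch of that baseline check in Python, assuming you copy the “percent AI” scores in by hand from each detector's page. The function names and the 50 point threshold are my own assumptions, and the human scores below are made-up placeholders. Only the three 100s come from the GPTZero results reported earlier in the thread.

```python
from statistics import mean


def flag_rate(scores: list[float], threshold: float = 50.0) -> float:
    """Fraction of samples whose 'percent AI' score is at or above `threshold`."""
    if not scores:
        raise ValueError("need at least one score")
    return sum(s >= threshold for s in scores) / len(scores)


def gap_from_baseline(human_scores: list[float], tool_scores: list[float]) -> float:
    """Mean tool-output score minus mean human-sample score, in percentage points."""
    return mean(tool_scores) - mean(human_scores)


# Placeholder scores for your own writing (hypothetical, replace with real runs).
human_gptzero = [20.0, 80.0, 60.0]
# GPTHuman outputs on GPTZero as reported earlier in the thread.
gpthuman_gptzero = [100.0, 100.0, 100.0]

print(f"human flag rate: {flag_rate(human_gptzero):.0%}")
print(f"gap from baseline: {gap_from_baseline(human_gptzero, gpthuman_gptzero):.1f} points")
```

A small gap means the detector is not really separating your writing from the tool's output, so its verdict on the tool carries little weight.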

Optimize for your actual risk level

Be clear about what you need:

• For casual blog posts or low risk content, one pass through GPTHuman plus light editing is enough.

• For school or compliance heavy work, I would not rely on it to “bypass all detectors”. Treat it as a style rewriter, not a shield.

If a use case has real stakes, mix in your own edits and some human fingerprints.

Examples:

• Add real personal details and specific dates.

• Reference local context from your experience.

• Insert short, imperfect sentences and minor stylistic quirks that match your normal writing.

Avoid the biggest mistakes I keep seeing

• Trusting the GPTHuman “human score” as safety. That bar is marketing, not an audit.

• Doing one pass and publishing without human review.

• Feeding in confidential or NDA text without checking their data and training policies.

• Expecting it to fix grammar for you. Treat the output as a draft, not finished copy.

• Running the entire document in one huge chunk, which leads to those broken endings and odd closings.

When to switch tools

If your workflow needs:

• More consistent behavior across detectors.

• Longer content handling without manual chunking.

• Less cleanup effort per piece.

Then GPTHuman starts to feel like overhead.

In that case, test a second tool in parallel for a week.

Clever Ai Humanizer is one obvious option to add to your stack, especially if you want to compare how each plays with GPTZero and ZeroGPT using the same inputs.

Example simple workflow you can steal and tweak:

- Draft in your main AI in plain, neutral style.

- Break at headings into 250 word blocks.

- Put each block through GPTHuman.

- Run Grammarly. Fix obvious errors and weird word swaps.

- Add 5 to 10 percent of your own edits, personal notes, or slight tone shifts.

- Spot check a few sections in GPTZero and ZeroGPT to see if your approach is trending better or worse over time.

- If detector results stay rough, run the same blocks in Clever Ai Humanizer and compare side by side.
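To check whether you are near the 5 to 10 percent mark for your own edits, a word-level diff gives a rough estimate. This is a minimal sketch using Python's standard `difflib`. The name `edited_share` is mine, and the number is an approximation, not an exact count of changed words.

```python
import difflib


def edited_share(before: str, after: str) -> float:
    """Approximate fraction of words changed between two versions (0.0 to 1.0)."""
    a, b = before.split(), after.split()
    if not a and not b:
        return 0.0
    # ratio() is 2 * matching_words / total_words across both versions.
    ratio = difflib.SequenceMatcher(None, a, b, autojunk=False).ratio()
    return 1.0 - ratio


tool_output = "The report shows that users are expected to log in every single day"
my_edit = "The report shows that users are expected to log in every single morning"
print(f"{edited_share(tool_output, my_edit):.1%} of the text changed")
```

Run it per block, comparing the humanizer's output with your edited version.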

If you share what type of content you write and how long each piece is, people here can help you tighten the steps even more.

You’re not missing some magic “pro workflow” button in GPTHuman. The ceiling really is what @mikeappsreviewer and @mike34 ran into. I mostly agree with them, but I’d tweak how you think about the whole thing:

1. Decide what you actually care about first

Most people jump straight to “can it beat detectors?” and that’s the wrong first question.

Ask in this order:

- Is my use case low risk (blog, affiliate, social) or high risk (school, client deliverables, compliance)?

- Do I need style help or detector evasion more?

- How much time am I willing to spend editing?

If your answers are:

- High risk

- Need solid detector performance

- Don’t want to edit much

…GPTHuman is already the wrong tool. You can still test it, but mentally treat it like a noisy paraphraser, not a “bypass” system.

2. Forget the internal “human score” completely

This is one area where I’ll be more blunt than both of them: that meter is mostly a distraction.

Optimizing your workflow around it is like tuning a car based on the cupholder design.

Instead of chasing that bar, define your own checks:

- 1 pass for grammar & syntax

- 1 pass for “does this sound like a real person I know?”

- Optional: detectors, but only for sanity checks, not as a judge & jury

If you catch yourself tweaking inputs just to push the GPTHuman bar higher, you’re wasting time.

3. Build a minimal review pipeline

You don’t need a complicated multi-tool circus. A lean, realistic flow for GPTHuman might look like:

- Draft in your main AI in a plain, non-fancy tone.

- Run through GPTHuman once. No cycling, no “try 8 versions” nonsense.

- Immediately run the result through Grammarly or LanguageTool. Fix:

  - Subject verb issues

  - Odd word swaps

  - Broken closings / trailing thoughts

- Do a voice consistency check:

  - Read 2 paragraphs of your real writing.

  - Read 2 paragraphs of the GPTHuman output.

  - Edit until they feel like the same person, or at least the same planet.

If the piece is important and GPTHuman is giving “drunk autocorrect” vibes near the end, just delete the messy section and rewrite it yourself. Don’t try to salvage obviously warped lines.

4. Use detectors only as trend indicators, not pass/fail

Here’s where I’m a bit skeptical of how people use GPTZero and ZeroGPT:

- They are incredibly noisy.

- They mislabel human text often.

- Optimizing to “0 percent AI” is a moving target.

Better approach:

- Collect 3 samples of your real writing.

- Run them through GPTZero and ZeroGPT.

- Note how bad the scores are. That’s your personal baseline.

Now when GPTHuman gives you outputs, you’re asking “How far is this from my own baseline?”, not “Did I get the magical 0 percent?”

If your own writing is getting slammed by GPTZero, then GPTHuman being flagged 100 percent tells you a lot less than it seems.

5. Know when GPTHuman is the wrong tool for the job

The biggest time sink I see: people trying to brute force it into use cases it’s simply not built to handle well.

You should probably skip or minimize GPTHuman if:

- You’re writing long form (4k+ words) and don’t want to babysit chunks.

- You’re under NDAs or compliance constraints where data usage policy actually matters.

- You need clean grammar out of the box, not “mostly fine with weird glitches.”

- You’re already comfortable editing and can rewrite AI outputs yourself faster.

In those cases, it’s usually smarter to:

- Use your main AI to generate

- Edit directly yourself

- Or test a different tool that’s more stable across detectors

6. Where Clever Ai Humanizer fits into a workflow

Since it already came up and you mentioned workflow optimization, here’s how I’d position it without repeating what’s been said:

- Treat GPTHuman as your “baseline humanizer test.”

- Run a few representative pieces through it and log:

  - Edit time per 1000 words

  - Detector scores vs your own writing

  - Number of obvious grammar issues you have to fix

Then do the same with Clever Ai Humanizer:

- Same inputs

- Same detector checks

- Same edit-time tracking

Whichever tool:

- Costs you less edit time

- Produces fewer “what is this sentence even doing?” moments

- Stays reasonably close to your own writing on detectors

…that becomes your primary “humanizer” in the stack. If Clever Ai Humanizer is smoother for you, make it the default and demote GPTHuman to backup or just move on.
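If you want the log to be more than a sticky note, here is one way it could look in Python. Everything in it is an assumption on my part: the `ToolRun` fields, the `summarize` helper, and the sample numbers, which are placeholders for two unnamed tools and not measurements of any real product.

```python
from dataclasses import dataclass


@dataclass
class ToolRun:
    tool: str            # label for the humanizer being tested
    words: int           # length of the piece, must be greater than zero
    edit_minutes: float  # time spent cleaning up the output
    grammar_fixes: int   # obvious grammar issues you had to fix
    detector_gap: float  # detector score minus your own baseline, in points


def summarize(runs: list[ToolRun]) -> dict[str, dict[str, float]]:
    """Per tool: edit minutes and fixes per 1000 words, plus mean detector gap."""
    grouped: dict[str, list[ToolRun]] = {}
    for run in runs:
        grouped.setdefault(run.tool, []).append(run)
    summary: dict[str, dict[str, float]] = {}
    for tool, items in grouped.items():
        words = sum(r.words for r in items)
        summary[tool] = {
            "edit_min_per_1k": sum(r.edit_minutes for r in items) * 1000 / words,
            "fixes_per_1k": sum(r.grammar_fixes for r in items) * 1000 / words,
            "mean_detector_gap": sum(r.detector_gap for r in items) / len(items),
        }
    return summary


# Hypothetical log entries, same inputs run through two tools.
runs = [
    ToolRun("tool_a", 500, 10.0, 4, 40.0),
    ToolRun("tool_a", 1500, 20.0, 6, 20.0),
    ToolRun("tool_b", 1000, 12.0, 3, 10.0),
]
for tool, stats in summarize(runs).items():
    print(tool, stats)
```

Lower is better on all three numbers, so the comparison is easy to eyeball once you have a handful of pieces logged for each tool.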

7. Common mistakes I see all the time

Try to avoid these, even if some tutorials online tell you otherwise:

- Doing multiple passes through GPTHuman hoping each pass magically “humanizes” more. It usually just compounds artifacts.

- Relying on it as a grammar fixer. It is not.

- Using it on already heavily stylized or flowery text. That’s when the strangest word swaps appear.

- Treating AI detectors like absolute truth instead of noisy classifiers.

If your current workflow is:

AI draft → GPTHuman → hit publish

…then you’re missing the critical step, which is you: manual review and small, honest edits. Add that, and even a mediocre tool can be part of a sane pipeline. Remove that, and even the “best” humanizer is a liability.