I’ve been testing the TwainGPT text humanizer for my blog content, but I’m not sure if it’s actually improving readability or just rephrasing for AI detectors. I need feedback from people who’ve used it long term: does it help with SEO, user engagement, and passing AI detection without hurting quality? Any real-world experiences or tips would be a huge help.

TwainGPT Humanizer review, from someone who spent way too long testing this stuff

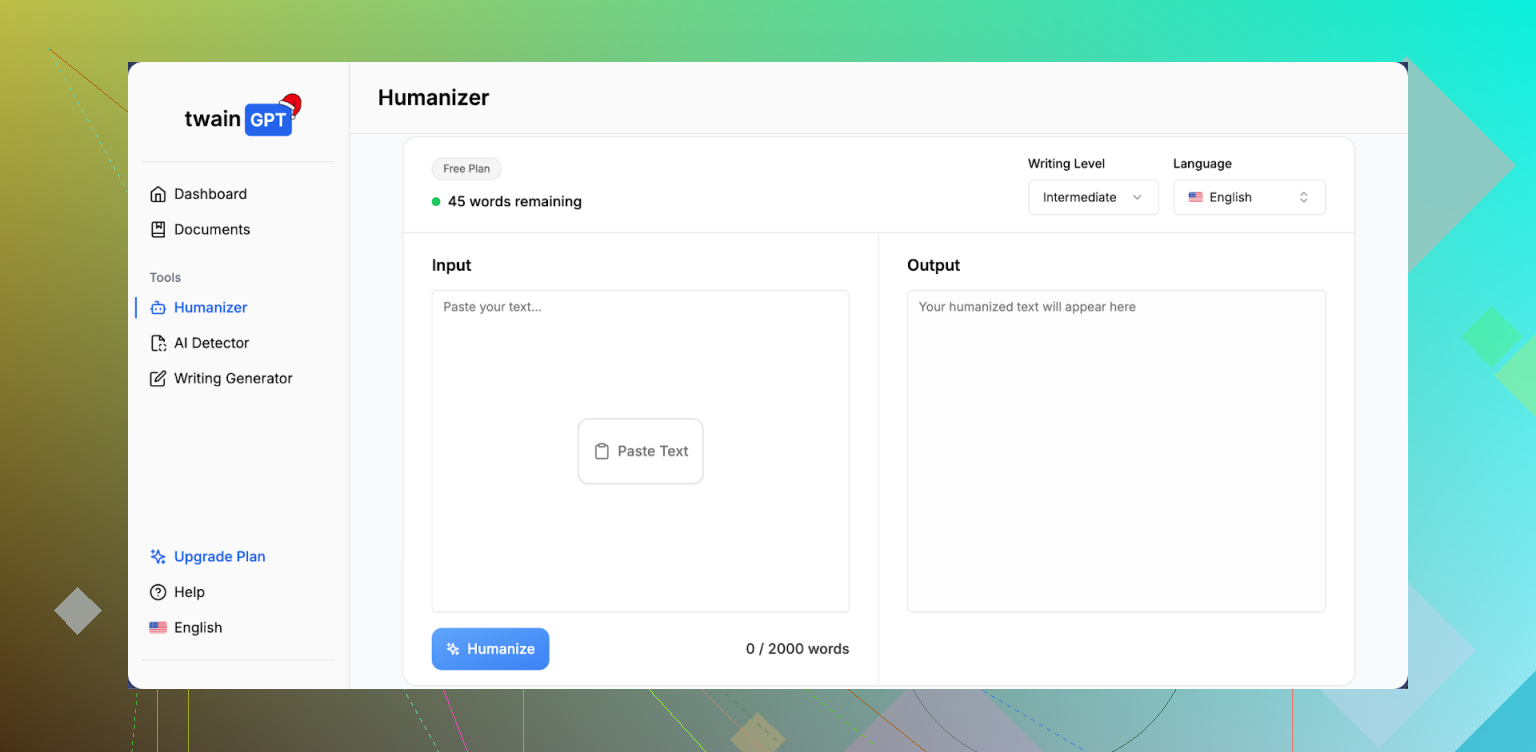

TwainGPT Humanizer looked promising on paper, so I ran it through the same tests I use for every “AI humanizer” tool.

Here is what happened.

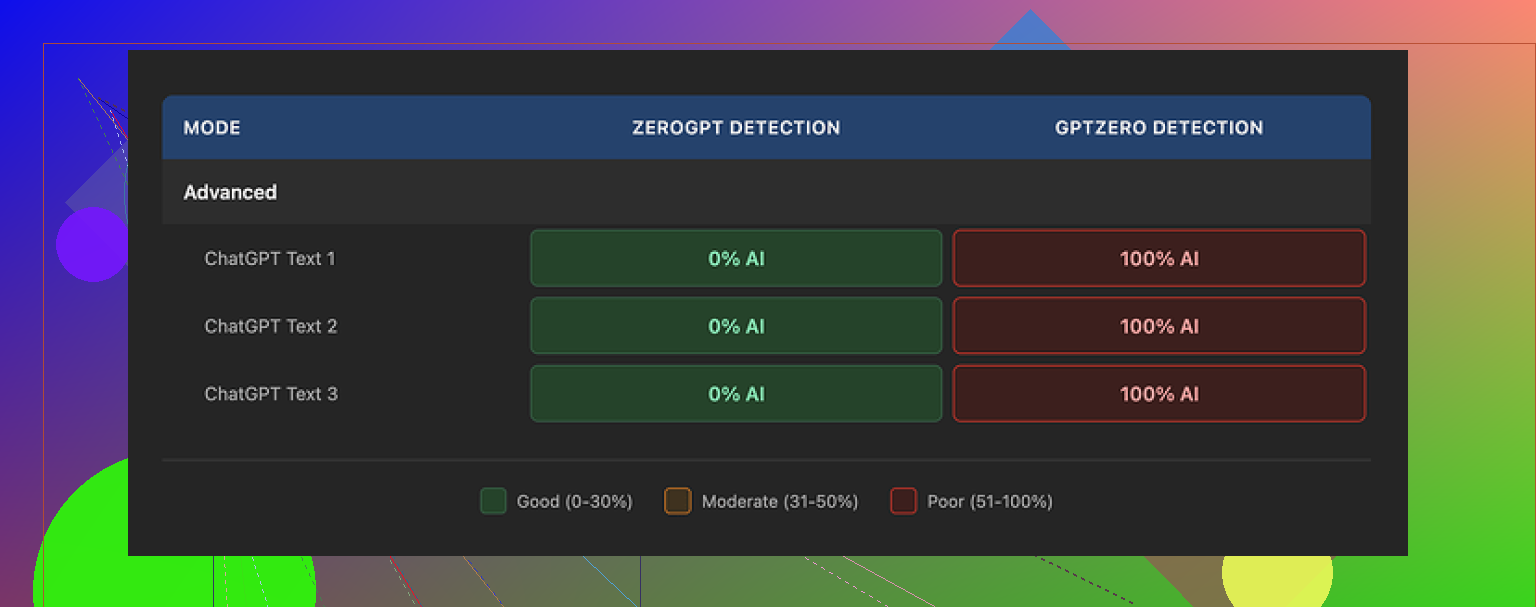

TwainGPT vs AI detectors

I fed three different AI-written samples into TwainGPT and then checked the outputs against multiple detectors.

Results:

• ZeroGPT

All three TwainGPT outputs showed 0% AI. Fully “human” according to that one tool.

• GPTZero

Same three outputs flagged as 100% AI generated.

So you get a perfect pass on one detector and a total fail on another. If you know your content will only be checked with ZeroGPT, TwainGPT does the job. If you are dealing with GPTZero or mixed tools, it turns into a guessing game.

This is where it breaks down for most real use cases. You usually do not control which detector the other side uses.
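To make the mixed-detector problem concrete, here is a minimal Python sketch that tabulates the scores reported above and checks whether each output clears every detector. The 20% cutoff and the `passes_all` helper are assumptions for illustration. Neither detector defines that threshold, so swap in whatever limit the other side actually applies.

```python
# AI-likelihood scores (percent) reported above for the three TwainGPT outputs.
scores = {
    "ZeroGPT": [0, 0, 0],
    "GPTZero": [100, 100, 100],
}

# Assumed cutoff: treat anything at or below 20% AI as a "pass".
# This threshold is hypothetical; each detector uses its own scale.
PASS_THRESHOLD = 20


def passes_all(sample_index, scores, threshold=PASS_THRESHOLD):
    """Return True only if the sample is at or under the threshold on every detector."""
    return all(results[sample_index] <= threshold for results in scores.values())


for i in range(3):
    per_tool = {tool: results[i] for tool, results in scores.items()}
    verdict = "pass" if passes_all(i, scores) else "fail"
    print(f"sample {i + 1}: {per_tool} -> {verdict}")
```

Every sample fails this check because GPTZero flags all three. A perfect score on one tool says little once a second tool is in play.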

How the text looks and feels

This part was rough.

The main pattern I saw: TwainGPT takes long sentences and chops them into short pieces. It does not add much nuance or style, it mostly slices.

The output ends up reading like someone took a normal paragraph and pressed Enter after every few words. It felt like reading bullet points that had lost their bullets.

Some things I noticed in the outputs:

• Fragmented flow, like a slide deck transcript

• Occasional run-ons mixed with abrupt sentence cuts

• Strange word choices that no native writer would pick

• Phrases that felt almost scrambled, borderline unreadable in places

It does “change” the text enough that detectors disagree, but the tradeoff is lower writing quality. I scored it 6 out of 10 for quality in my notes: the output stays basically coherent, but offers nothing beyond that.

Pricing and refund trap

Their pricing when I checked:

• Starter: 8 dollars per month (annual billing) for 8,000 words

• Top tier: 40 dollars per month for unlimited words

The painful part: no refunds. At all. Even if you forgot to use it. Even if you used 0 words. They make that pretty firm.

So your only safe move is to squeeze every bit out of their free limit before you enter a card. They offer about 250 words free to test. Use those with your own content, then run the outputs through several detectors before you decide.
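Since there is no refund, it helps to work out what you are committing to before entering a card. The sketch below is rough arithmetic on the prices listed above. The assumption that "annual billing" means twelve months charged up front is mine, so check the checkout page before relying on it.

```python
# Prices as listed above; treat them as a snapshot, since plans can change.
STARTER_PRICE = 8.0       # dollars per month, billed annually
STARTER_WORDS = 8_000     # words included per month
TOP_TIER_PRICE = 40.0     # dollars per month, unlimited words


def cost_per_1k_words(price, words):
    """Dollars paid per 1,000 words if the full allowance is used."""
    return price * 1_000 / words


starter_rate = cost_per_1k_words(STARTER_PRICE, STARTER_WORDS)

# Monthly word count at which the top tier costs the same per word as Starter.
break_even_words = TOP_TIER_PRICE / starter_rate * 1_000

# Assumption: "annual billing" means twelve months are charged up front.
annual_commitment = STARTER_PRICE * 12

print(f"Starter: ${starter_rate:.2f} per 1,000 words")
print(f"Top tier matches Starter's rate at {break_even_words:,.0f} words per month")
print(f"Starter annual commitment: ${annual_commitment:.2f}")
```

With the listed prices, Starter works out to 1 dollar per 1,000 words if you use the whole allowance, and the top tier only reaches that per-word rate at 40,000 words a month.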

Clever AI Humanizer comparison

To keep this fair, I ran the same kind of tests with Clever AI Humanizer.

My experience was better there. The text flowed more naturally, and detection results were stronger across multiple tools, not only one. And it costs nothing.

If your budget is tight or you are experimenting, start there first. Then, if you still want to pay for TwainGPT, do it with your eyes open.

Screenshots I used

Detector proof and detailed breakdown:

https://cleverhumanizer.ai/community/t/twaingpt-humanizer-review-with-ai-detection-proof/36

Practical takeaway

If your content will be tested mainly with ZeroGPT, TwainGPT looks effective on that single metric, but the writing feels robotic and segmented.

If you care about:

• passing a mix of detectors

• having text that reads like something a human wrote on a normal day

• not paying for something with no refund safety

then I would start with Clever AI Humanizer or other free options, test heavily, and only pay once you see consistent results on your own samples.

I used TwainGPT for about 2 months on client blogs, so here is the blunt version.

Short answer to your question about readability vs detector gaming: it mostly rewrites for detectors. Readability gains were small, and sometimes the text got worse.

My setup:

• Niche blogs, 1.5–2k word posts

• Drafts from GPT‑4

• Targets: pass AI checks on edits and feel natural to human readers

What I saw long term:

- Readability and style

• It chops long sentences into short lines, like @mikeappsreviewer said, but I did not find it totally unreadable.

• It tends to flatten tone. Your voice turns into generic “internet article” voice.

• For conversational blogs, it often removed the little quirks that make you sound human.

• On technical content, it sometimes introduced slight ambiguity. I had to re-edit to fix meaning.

Net: I spent extra time editing the output back into my style. That killed most of the time savings.

- AI detector behavior

My tests over several weeks:

Tools used:

• Originality.ai

• GPTZero

• ZeroGPT

• Copyleaks

Sample size:

• Around 25 posts, each processed with TwainGPT.

Patterns:

• ZeroGPT: strong passes most of the time.

• GPTZero: mixed. Some paragraphs passed, some still flagged high.

• Originality.ai: detection scores dropped, but almost never to “safe” human levels.

• Copyleaks: mild improvement, not enough to rely on.

So if your concern is a single tool, TwainGPT can help with that one. For mixed tools, it becomes a dice roll.

- Impact on your blog content

For blogs, 3 issues matter:

• Time

You still need to:

– Check if meaning changed.

– Fix choppy flow.

– Restore your tone.

On average I spent 15–20 minutes per 1k words cleaning up. That is similar to editing the original AI draft directly.

• Brand voice

If your blog has a clear personality, TwainGPT tends to sand that off. Everything starts to sound like generic explainers. Fine for content farms, not great for audience trust.

• SEO and engagement

I did not see ranking drops caused by TwainGPT itself, but:

– Time on page for a few posts dropped a bit after I switched older posts to TwainGPT versions.

– Comments from regular readers said the writing felt “off” or “less you” on two posts.

Small sample, so not hard proof, but enough for me to stop running it on posts that matter.

- Pricing vs output

The no‑refund thing bugged me too. For a tool that often needs manual fixing, the subscription felt steep. If you already write decent AI‑assisted drafts, TwainGPT adds limited value.

- Alternative that worked better for me

I ended up switching to Clever Ai Humanizer for most of my testing.

Key reasons:

• The text kept more natural flow.

• Detectors like Originality.ai and GPTZero gave better results on my samples, across the mix of tools rather than on just one.

• No subscription pressure.

If you want something SEO friendly and still human‑readable, I would test your own posts through it.

Run:

- Your raw AI draft

- TwainGPT version

- Clever Ai Humanizer version

Compare:

• Which one needs less fixing for your tone

• Which one readers respond to

• Which one passes the tools you expect your clients or platforms to use

- Practical suggestion for you

Since you already use TwainGPT for your blog:

• Grab 3 old posts.

• Keep version A as is.

• Make version B with TwainGPT and only light edits.

• Make version C with Clever Ai Humanizer with similar light edits.

• Share them with your audience or a few trusted readers without saying which is which.

Ask:

– Which feels most natural.

– Which feels most like “you”.

If TwainGPT keeps needing heavy edits or keeps flattening your voice, it is not helping your readability. Then it is mainly a detector dodge, with all the usual risks if detection methods change.
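If you want the blind comparison to stay honest, a few lines of Python can handle the labelling and the vote count. This is only a sketch: `blind_labels`, `tally`, and the example votes are hypothetical names and placeholder data, not results from my tests.

```python
import random


def blind_labels(versions, seed=None):
    """Shuffle version names and map neutral labels ("Text 1", ...) to them.

    Returns a dict mapping each neutral label to a real version name.
    Keep it private until readers have answered.
    """
    rng = random.Random(seed)
    shuffled = list(versions)
    rng.shuffle(shuffled)
    return {f"Text {i}": name for i, name in enumerate(shuffled, start=1)}


def tally(votes, key):
    """Count votes per real version name, given votes cast on neutral labels."""
    counts = {name: 0 for name in key.values()}
    for label in votes:
        counts[key[label]] += 1
    return counts


versions = ["A: original", "B: TwainGPT", "C: Clever Ai Humanizer"]
key = blind_labels(versions, seed=42)

# Placeholder reader answers to "which feels most like you?" (not real data).
example_votes = ["Text 1", "Text 3", "Text 1"]
print(tally(example_votes, key))
```

Show readers only the "Text 1/2/3" labels, collect their picks, and reveal the key afterwards. Fixing the seed just makes the shuffle repeatable if you need to rebuild the key later.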

Same boat here. I used TwainGPT for a while on blog stuff (30-ish posts), and here’s the blunt take:

It does change the text enough that some detectors calm down, but in practice it feels more like “detector camouflage” than an actual writing upgrade.

Where I see it differently from @mikeappsreviewer and @cacadordeestrelas:

- I didn’t find it totally unreadable, but it gave everything that “generic content mill article” vibe. If you care about having a voice, it slowly erases it.

- On long form posts, it sometimes messed with emphasis. Not outright wrong, just… off. You know when a sentence is technically fine but not what you would’ve actually said? A lot of that.

- For me, the biggest problem was consistency. One paragraph would sound decent, next one suddenly choppy like someone hit Enter every 8 words.

Long term impact on my workflow:

- I stopped trusting it with full posts. I’d run a few tricky paragraphs through it, then end up rewriting big chunks anyway.

- Time saved vs just editing the raw GPT‑4 draft myself was basically zero.

- A couple of regular readers even DM’d that some new posts felt “less natural” or “like you changed writers.” That was the nail in the coffin.

On detectors, my pattern lined up with what those two already said, so I won’t repeat test setups:

- Stronger effect on some tools, weak or random on others.

- If your client or platform might rotate detectors, you’re gambling. And detector tech is changing faster than tools like TwainGPT can keep up, so I really would not build a strategy around it.

Where it might make sense:

- If you push high‑volume, low‑personality content where brand voice doesn’t matter.

- If you know a specific detector is used and you’ve verified TwainGPT plays nice with it.

- If you’re fine doing manual cleanup after every run.

Otherwise, it’s mostly rephrasing for detectors with side‑effects on readability, not a genuine “make my writing better” tool.

If your goal is more natural, readable AI content that still has a shot with multiple detectors, I’d test something like Clever Ai Humanizer side by side. It handled flow better on my posts and felt less like it was just slicing sentences.

Quick way to stress‑test your situation:

- Take one of your existing blog posts from your current niche.

- Version A: your normal GPT draft with your own edits only.

- Version B: run through TwainGPT, then do minimal edits.

- Version C: run through Clever Ai Humanizer, again minimal edits.

Then:

- Read all three out loud. Which one sounds most like you on a normal day?

- Paste a few random paragraphs into the detectors you actually care about.

- Watch your analytics over a few posts instead of just a single one: time on page, scroll depth, comments.

If TwainGPT keeps flattening your tone and forcing extra cleanup, it is not helping readability. At that point it’s just a fancier paraphraser with a subscription and a no‑refund policy, and that’s a hard sell for long‑term blogging.