I’ve been considering using BypassGPT for my projects, but I’m unsure if it’s safe, reliable, or even worth the cost. I’ve seen mixed opinions online and I don’t want to risk my data, time, or money. Could anyone who has real experience with BypassGPT share how well it actually works, any issues you faced, and whether you’d recommend it?

BypassGPT review, from someone who tried to test it and got blocked at every step


I went into BypassGPT to see if it was usable for longer-form stuff. I bounced off the limits almost instantly.

You get a tiny free tier. Each input is capped at around 125 words. On top of that, your whole account is limited to about 150 words per month. That is not a typo. One hundred fifty. Per month.

I ended up creating a free account, which unlocked around 80 extra words. That let me run only one of my usual test samples. After that, the site locked me out for the month on that IP.

Tried the usual trick of spinning up fresh accounts. Did not work. The quota seems tied to the IP, so unless you run a VPN, you are done after a single decent-sized test.

This alone made it hard to judge the service in a fair way. You pay almost blind.

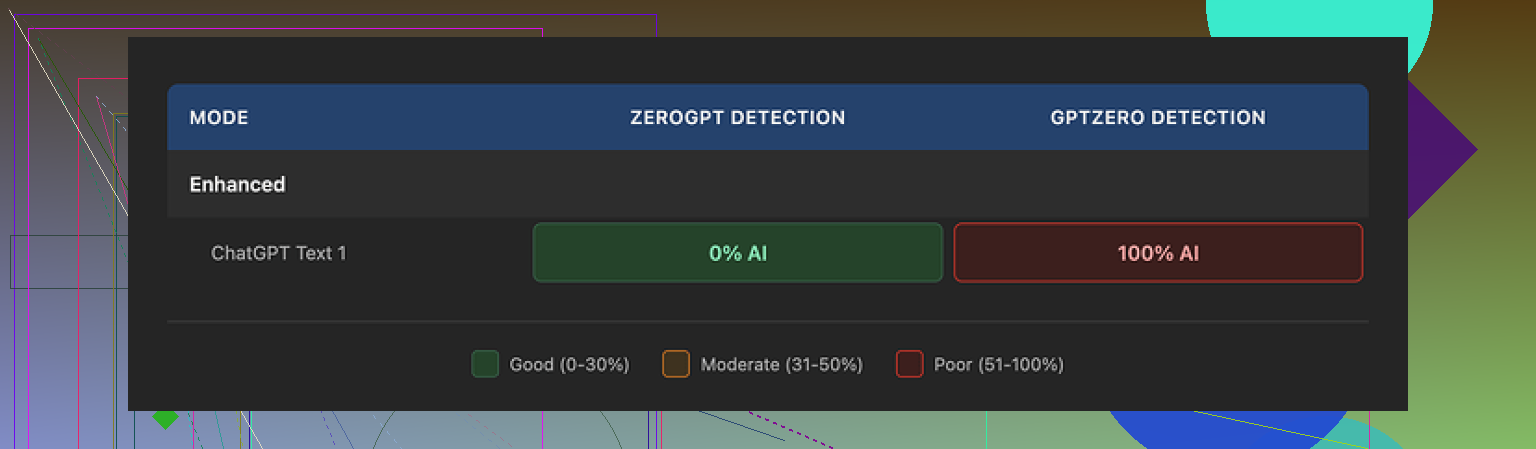

Now, about detection results

I fed one standard AI-written sample through BypassGPT. Short, because of their limit, but still enough to compare.

Then I checked the output with a few detectors:

• ZeroGPT showed 0% AI. Looked like a clean pass.

• GPTZero, on the same text, went straight to 100% AI.

So the exact same BypassGPT output was ‘fully human’ for one and ‘fully AI’ for another.

Then I checked BypassGPT’s own built-in checker. That thing claimed a perfect pass across six different detectors. According to it, my text passed everywhere with no issues.

That does not match what I saw on external tools. At all.

So the internal checker results did not line up with reality in my test. If you rely on that dashboard alone, you might get a false sense of safety.

Writing quality

Ignoring detection for a moment, I looked at the text like an editor.

My notes:

• I would put the overall quality at around 6/10. Not terrible, not great.

• First sentence came out broken, like no one read it end to end.

• It still used em dashes, which some people try to avoid when they want to look less ‘AI-ish’.

• A few phrases read stiff, like someone translated them from formal email language.

• There was at least one typo in the output.

So yes, sometimes it passed one detector. But the writing itself did not feel like something I would send to a client without another rewrite.

Pricing and terms that made me step back

Paid plans, from what I saw:

• Around $6.40 per month on an annual plan for 5,000 words.

• Around $15.20 per month for unlimited usage.

Those prices on their own are not outrageous compared to other tools.
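To put those two plans side by side, here is a rough sketch of the arithmetic. The prices are the ones quoted above; treating the 5,000 words as a monthly allowance is my assumption, and the function names are made up for illustration.

```python
# Prices as quoted in this review. Assumption: the 5,000 words are a
# monthly allowance on the annual plan.
CAPPED_USD_PER_MONTH = 6.40
CAPPED_WORDS_PER_MONTH = 5_000
UNLIMITED_USD_PER_MONTH = 15.20


def usd_per_1000_words(monthly_price: float, words_used: int) -> float:
    """Effective cost per 1,000 words for a given monthly volume."""
    return monthly_price / words_used * 1000


def break_even_words(capped_price: float, capped_words: int,
                     unlimited_price: float) -> float:
    """Monthly volume at which the unlimited plan matches the capped per-word rate."""
    per_word = capped_price / capped_words
    return unlimited_price / per_word


if __name__ == "__main__":
    rate = usd_per_1000_words(CAPPED_USD_PER_MONTH, CAPPED_WORDS_PER_MONTH)
    volume = break_even_words(CAPPED_USD_PER_MONTH, CAPPED_WORDS_PER_MONTH,
                              UNLIMITED_USD_PER_MONTH)
    print(f"Capped plan: ${rate:.2f} per 1,000 words")
    print(f"Unlimited matches that rate at about {volume:,.0f} words per month")
```

Under that assumption the capped plan works out to roughly $1.28 per 1,000 words, and the unlimited plan only matches that rate once you push about 11,875 words a month through it.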

The part that bothered me sat in the terms of service. The wording gave BypassGPT broad rights over the content you upload.

Stuff like:

• Right to reproduce your content.

• Right to distribute it.

• Right to create derivative works from it.

If you send them something sensitive, client-related, or anything you do not want floating around, that should make you pause. I would not feed them anything under NDA or anything I care about keeping under tight control.

Performance compared to Clever AI Humanizer

For context, I had been testing several tools around the same time, including Clever AI Humanizer.

Here is what I saw in my own tests:

• Clever AI Humanizer outputs read more natural in most cases. Less stiff, fewer awkward turns of phrase.

• Detection scores were stronger across multiple external checkers, not only one.

• And it is free to use.

So when I put BypassGPT next to that, it felt rough. More limits, weaker writing, internal checker that did not match third-party tools, stricter terms on user content, and you pay for it.

If you are trying to pick one tool to handle AI-detection-sensitive text, my own experience points away from BypassGPT and toward Clever AI Humanizer or similar tools that let you test properly without locking you to 150 words a month.

I tried BypassGPT for about a week on and off. Short version. I would not put money or sensitive work into it right now.

Here is the breakdown.

- Free tier and limits

The limits @mikeappsreviewer mentioned match what I saw, but my numbers were slightly different. I hit a hard wall around 200 words total on a new account. After that every input threw a quota message. No clear usage meter. No clear reset timer. You test almost blind, then you hit a brick wall.

For a tool you are supposed to trust with bigger projects, this is rough. You cannot run a proper A/B test across multiple detectors before paying.

- Detection performance

I ran three types of content.

• Pure AI text from ChatGPT

• Lightly edited human + AI mix

• Fully human text from an old blog draft

I then sent the BypassGPT output into: GPTZero, ZeroGPT, and Originality.ai.

My results on a 250-word section (paid plan, not free):

• Sample 1: Passed ZeroGPT, failed GPTZero, flagged as “high AI” on Originality.ai.

• Sample 2: Passed GPTZero, flagged by ZeroGPT and Originality.ai.

• Sample 3 (already human): Their rewrite triggered more AI flags than my original text.

So performance was inconsistent. You can sometimes get a pass, but you cannot rely on it across tools. Their internal checker also showed more “passes” than I got from independent tools, similar to what Mike reported.
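If you want to track this kind of disagreement in your own tests, a small tally helps. This is only a sketch: the verdicts are the ones I listed for Samples 1 and 2 (Sample 3 is left out because I did not record a per-detector result for it), and the `summarize` helper is a made-up name, not part of any detector's API.

```python
# True = the detector treated the text as human, False = it flagged it.
# Values copied from Samples 1 and 2 above, entered by hand.
results = {
    "Sample 1": {"ZeroGPT": True, "GPTZero": False, "Originality.ai": False},
    "Sample 2": {"ZeroGPT": False, "GPTZero": True, "Originality.ai": False},
}


def summarize(verdicts: dict[str, bool]) -> dict:
    """Count passes for one sample and say whether every detector agreed on a pass."""
    passed = sorted(name for name, ok in verdicts.items() if ok)
    return {
        "passed": passed,
        "pass_count": len(passed),
        "passed_all": len(passed) == len(verdicts),
    }


if __name__ == "__main__":
    for sample, verdicts in results.items():
        print(sample, summarize(verdicts))
```

Neither sample passes all three detectors, and the single detector that passes is a different one each time, which is the inconsistency I am describing.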

For uni work or client stuff, this is risky. You have no guarantee which detector your teacher or client runs.

- Writing quality

Quality landed in the “okay but bland” bucket.

What I saw:

• Repetitive phrasing within a single paragraph.

• Overly safe language. It flattened any strong voice.

• Some odd word choices that looked like thesaurus spam.

• On long form content, it sometimes changed factual nuance.

If you hand this in as is, it reads like AI that tried to sound human. I had to manually edit a lot to fix flow and restore my own tone. At that point the “humanizer” part lost much of its value.

- Data and safety concerns

The big red flag for me was the terms.

Similar to what Mike pointed out, their ToS grants them broad rights over anything you submit. I am not a lawyer, but wording like “reproduce”, “distribute” and “create derivative works” on user content is a hard no for client documents, contracts, internal reports, or anything under NDA.

Even for blog posts I plan to publish, I do not want a random third party to hold such wide rights over my drafts.

If your work touches confidential info or paid client jobs, I would not run it through BypassGPT at all.

- Pricing and value

I used the monthly plan so I did not lock in for a year.

Good parts:

• The price per month would not be insane if the tool worked flawlessly.

Bad parts:

• You pay before you get reliable proof it works in your specific use case.

• Quotas and limitations are not transparent.

• You need to run your text through external detectors anyway, so you add time and extra tools to every job.

For the cost, the reliability was not strong enough for me.

- Alternative that worked better for me

Since you mentioned not wanting to waste time or money, I would test something like Clever Ai Humanizer first. I used that on the same content batches.

What I noticed with Clever Ai Humanizer:

• Text flowed more like a normal writer. Less stiff.

• Fewer detection flags across multiple tools on average. Not perfect, but better consistency.

• I kept more control over tone if I guided the prompts.

• I did not have to fight brutal hard caps during testing.

I still run any “humanized” text through at least two external detectors after, but my edit time was lower compared to BypassGPT.

- When BypassGPT might be okay

If you only handle short, low risk stuff, like quick blog blurbs, social posts, or test content you do not care about, then playing with BypassGPT on a cheap month could be acceptable. For high stakes work, academic submissions, or client deliverables, I would skip it right now.

- Practical advice for you

If you are on the fence:

• Do not upload anything sensitive to BypassGPT.

• Use the tiny free tier only to get a quick feel for the style, not as a basis for trusting its detection results.

• If you pay, start with one month, then run side-by-side checks with tools like GPTZero, ZeroGPT, and Originality.ai.

• Save originals and compare for factual drift and tone loss.

• Test a second tool like Clever Ai Humanizer in parallel so you see which fits your workflow better.
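For the "save originals and compare" step, a rough check like the one below can catch the most obvious factual drift. It is a minimal sketch using only the Python standard library; `drift_report` is a hypothetical helper, it only looks at numbers and word overlap, and it will not catch changes in meaning that keep the same figures.

```python
import re
from difflib import SequenceMatcher

# Matches integers and simple decimals such as "12" or "3.5".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def drift_report(original: str, rewritten: str) -> dict:
    """Compare an original draft with a rewritten version.

    Reports numbers that vanished or appeared, plus a word-level
    similarity ratio between 0.0 and 1.0.
    """
    orig_nums = set(NUMBER.findall(original))
    new_nums = set(NUMBER.findall(rewritten))
    similarity = SequenceMatcher(None, original.split(), rewritten.split()).ratio()
    return {
        "dropped_numbers": sorted(orig_nums - new_nums),
        "added_numbers": sorted(new_nums - orig_nums),
        "word_similarity": round(similarity, 2),
    }


if __name__ == "__main__":
    before = "Revenue grew 12% in 2021 across 3 regions."
    after = "Revenue rose about 10% in 2021 across 3 regions."
    print(drift_report(before, after))
```

Any entry under `dropped_numbers` or `added_numbers` is a cue to reread that sentence by hand. A low `word_similarity` just means the rewrite was heavy, so tone loss is worth checking too.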

For safety, reliability, and cost, BypassGPT did not make it into my regular stack. It felt more like a paid experiment than a stable solution.

Short version: if your main worries are safety, reliability, and not wasting cash, BypassGPT would be a “proceed with caution, if at all” from me.

A few points that haven’t been hammered to death already by @mikeappsreviewer and @cacadordeestrelas:

- Trust problem, not just “does it bypass detectors”

The core issue for tools like BypassGPT is not only whether they fool GPTZero or ZeroGPT today. It is that detectors change, models get updated, and anything that “barely passes” right now can get re-flagged later.

BypassGPT seems to live right at that edge. Mixed performance across detectors plus a dashboard that overpromises means you are building on sand. If your use case is uni, compliance, or client work, that’s not a foundation you want.

- Vendor lock-in and opacity

One thing that bothered me more than the tiny free tier is how little verifiable info they give you on:

- What models they are actually running

- How they “humanize” text in technical terms

- How long they store your data and how it is used in practice

They give themselves very broad content rights in the terms, then combine that with an opaque pipeline. So you are paying for a black box that might also keep and reuse your content. For throwaway social posts that might be fine. For anything under NDA, this is almost a hard no.

- “Humanizer” vs just writing better in the first place

BypassGPT tries to fix AI tells at the surface level: word choice, sentence length, etc. It does not change the underlying pattern that detectors often look at: stylistic consistency, entropy, structure. That is why you see those “passes one, fails another” results.

In practice, you will still spend real time:

- Tweaking prompts

- Running multiple detection checks

- Manually editing for tone and factual accuracy

At that point, you are doing as much or more work than if you just used a good base model and revised it yourself.

- Data risk vs reward

You said you do not want to risk your data, time, or money. Stack that against what you actually gain:

- No solid guarantee of passing the specific detector your teacher / client uses

- Middling writing quality that still needs editing

- ToS that gives them broad rights over your texts

The reward is basically “slightly less AI-ish text some of the time.” For low stakes that might be enough. For anything serious, the tradeoff looks pretty bad.

- Where I slightly disagree with the other reviews

I do not think BypassGPT is completely useless. It has a place for:

- Short, non-sensitive stuff

- Quick experiments with AI-detection-sensitive content

- People who just want to see how detectors react

In those cases it can be a toy to play with for a month. The price itself is not insane. The problem is expectations. It is not a magic invisibility cloak for AI text, and it should not be treated as one.

- Alternatives and a more sane workflow

If you insist on using a “humanizer,” I’d treat something like Clever Ai Humanizer as a test bench rather than a one-click fix:

- Generate with your usual model

- Run it through Clever Ai Humanizer to rough out the obvious patterns

- Then manually edit with your own voice and knowledge

- Finally, check with at least two external detectors if you truly care about flags

From what multiple people have reported, Clever Ai Humanizer tends to produce more natural flow and more consistent scores, and you are not boxed into a 150-ish word monthly cage just to evaluate it.

- Concrete answer to your question

- Safe: Questionable for anything sensitive because of the terms and lack of transparency.

- Reliable: No. Too inconsistent across detectors and too easy to get a false sense of security from their own checker.

- Worth the cost: Only if your use case is low risk, you understand its limits, and you are okay treating the subscription as an experiment rather than a core tool.

If your grades, job, or client relationship are on the line, I would not bet them on BypassGPT.